Searching for "machine learning"

My annotations to the document:

https://hyp.is/go?url=https%3A%2F%2Fmedia-exp1.licdn.com%2Fdms%2Fdocument%2FC561FAQGCEOxjElpYdQ%2Ffeedshare-document-pdf-analyzed%2F0%2F1639993836361%3Fe%3D1640433600%26v%3Dbeta%26t%3DgSSwhduluTdmUfDnG9LDDo1cwS5gkvabzab4IiuX8ac&group=__world__

++++++++++++++++

more on machine learning in this IMS blog

https://blog.stcloudstate.edu/ims?s=machine+learning

https://www.mckinsey.com/business-functions/mckinsey-analytics/our-insights/an-executives-guide-to-ai

How Machine Learning and the Cloud Can Rescue IT From the Plumbing Business

FROM AMAZON WEB SERVICES (AWS)

https://www.edsurge.com/news/2019-02-19-how-machine-learning-and-the-cloud-can-rescue-it-from-the-plumbing-business

Many educational institutions maintain their own data centers. “We need to minimize the amount of work we do to keep systems up and running, and spend more energy innovating on things that matter to people.”

What’s the difference between machine learning (ML) and artificial intelligence (AI)?

Jeff Olson: That’s actually the setup for a joke going around the data science community. The punchline? If it’s written in Python or R, it’s machine learning. If it’s written in PowerPoint, it’s AI.

Machine learning is in practical use in a lot of places, whereas AI conjures up all these fantastic thoughts in people.

What is serverless architecture, and why are you excited about it?

Instead of having a machine running all the time, you just run the code necessary to do what you want—there is no persisting server or container. There is only this fleeting moment when the code is being executed. It’s called Function as a Service, and AWS pioneered it with a service called AWS Lambda. It allows an organization to scale up without planning ahead.
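To make the "fleeting moment" idea concrete, here is a minimal sketch of what a function deployed to a FaaS platform can look like. It assumes the Python handler convention used by AWS Lambda (a function that receives an `event` and a `context`); the `name` field and the response shape with `statusCode` and `body` are illustrative assumptions, not something taken from the interview.

```python
import json


def lambda_handler(event, context):
    """Entry point the platform calls once per request; nothing runs in between."""
    # 'event' carries the request payload. The 'name' key is a hypothetical field.
    name = event.get("name", "world")
    # Response shape is an assumption, modeled on a typical HTTP-style reply.
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local simulation of a single invocation; no server is kept running.
    print(lambda_handler({"name": "campus"}, None))
```

The whole deployable unit is this one function. Provisioning, scaling, and teardown are left to the platform, which is the "plumbing" the interview refers to.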

How do you think machine learning and Function as a Service will impact higher education in general?

The radical nature of this innovation will make a lot of systems that were built five or 10 years ago obsolete. Once an organization comes to grips with Function as a Service (FaaS) as a concept, it’s a pretty simple step for that institution to stop doing its own plumbing. FaaS will help accelerate innovation in education because of the API economy.

If the campus IT department will no longer be taking care of the plumbing, what will its role be?

I think IT will be curating the inter-operation of services, some developed locally but most purchased from the API economy.

As a result, you write far less code and have fewer security risks, so you can innovate faster. A succinct machine-learning algorithm with fewer than 500 lines of code can now replace an application that might have required millions of lines of code. It also scales. If you happen to have a gigantic spike in traffic, it deals with it effortlessly. If you have very little traffic, you incur a negligible cost.

++++++++

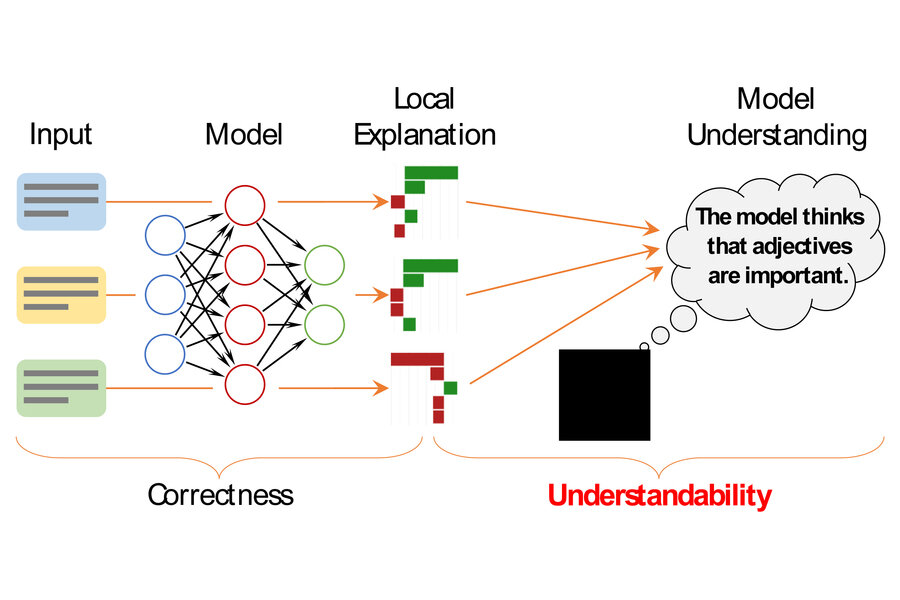

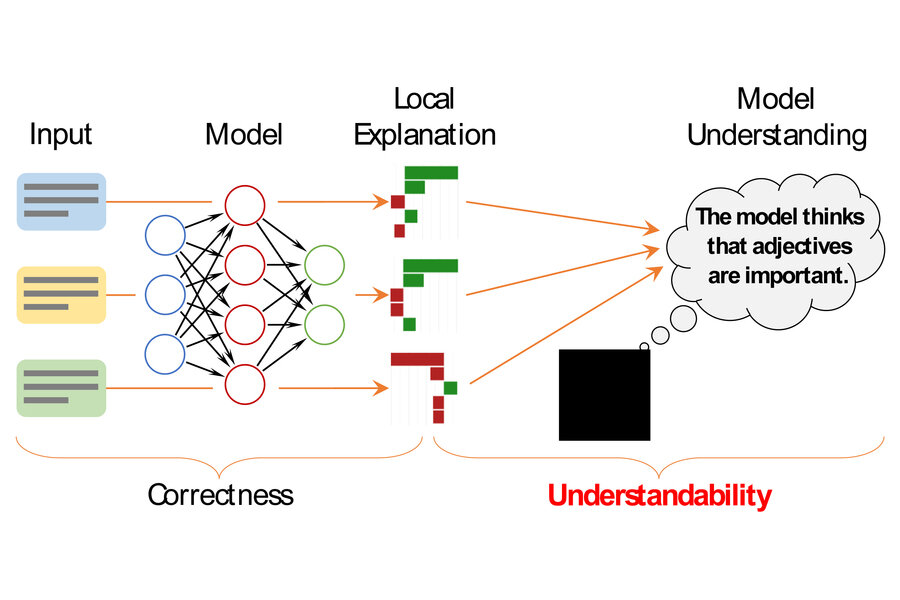

Framework to describe individual machine-learning model decisions

https://techxplore.com/news/2022-05-framework-individual-machine-learning-decisions.html

++++++++++++++++++++++

Project centers on artificial intelligence; new National Institute in AI to be headquartered at Georgia Tech

https://gra.org/blog/209

“The goal of ALOE is to develop new artificial intelligence theories and techniques to make online education for adults at least as effective as in-person education in STEM fields,” says Co-PI Ashok Goel, Professor of Computer Science and Human-Centered Computing and the Chief Scientist with the Center for 21st Century Universities at Georgia Tech.

Research and development at ALOE aims to blend online educational resources and courses to make education more widely available, as well as use virtual assistants to make it more affordable and achievable. According to Goel, ALOE will make fundamental advances in personalization at scale, machine teaching, mutual theory of mind and responsible AI.

The ALOE Institute represents a powerful consortium of several universities (Arizona State, Drexel, Georgia Tech, Georgia State, Harvard, UNC-Greensboro); technical colleges in TCSG; major industrial partners (Boeing, IBM and Wiley); and non-profit organizations (GRA and IMS).

+++++++++++++++++++++++

more on AI in this IMS blog

https://blog.stcloudstate.edu/ims?s=artificial+intelligence

https://blog.stcloudstate.edu/ims?s=online+education

Why online HE should be about learning, not teaching

https://www.universityworldnews.com/post.php?story=20210126142422302

The gate is now wide open

Teachers used to be the gatekeepers to information, to knowledge. The successive inventions of writing and reading, print, the library, and then the World Wide Web, mean that we teachers are no longer the gatekeepers.

Schools, universities and teachers to some extent remain the gatekeepers to knowledge, the definers of what comprises valid knowledge. We do this, of course, through holding the ultimate educational power – the power to assess.

But it is not clear how long we teachers will, or should, hold this power. Increasingly, students, and employers, nations, cultures, many groups in our societies, rightly want a say in defining what is valid knowledge, a valid curriculum.

Knowledge isn’t enough

Each year, vast amounts of new knowledge are produced. Also, each year, vast amounts of current knowledge become wrong, or redundant, or both. Knowledge dies. In some subjects, a significant proportion of what was taught in the first year will have died by the time the students who learned it graduate. So, what is education for?

Machines are doing more and more of the work

The bad news is, we are getting squeezed out of work. The good news is, we are getting squeezed up, into ever more interesting work. We will be able to stay ahead for a long time, because there are always more difficult and important and exciting things for us to do, increasingly with the support of our increasingly capable machines.

Employers want graduates to be job-ready. They also want graduates to be fluent in the five Cs: Creativity, Communication, Collaboration and Criticality as well as Competence. Not all university education currently develops the first four Cs. Very little university education currently gives high priority to their development. Rarely are they formally assessed.

Changing outcomes, changing pedagogies

The architecture of a university expresses its views about pedagogy. This remains true with the great leap online. The old pedagogic architecture – of teaching as (mainly) telling, of learning as (mainly) listening and reading, of access in and through the library to specified stored knowledge, and of assessment as (mainly) recalling, repeating back what has been learned, perhaps with some application or interpretation – has for the most part been recreated in digital form, with varying degrees of success.

+++++++++++

more on online education in this IMS blog

https://blog.stcloudstate.edu/ims?s=online+education

2020 Immersive Learning Technology

https://www.jff.org/what-we-do/impact-stories/jfflabs-acceleration/2020-immersive-learning-technology/

2020-Immersion-012420 per Mark Gill’s finding

Technology is rapidly changing how we learn and grow. More and more, tools and platforms that make use of virtual reality (VR), augmented reality (AR), and extended reality (ER)—collectively known as immersive learning technology—are moving from the niche world of Silicon Valley into retail stores, warehouses, factory floors, classrooms as well as corporate education and training programs. The value is clear: these immersive learning tools help companies, training providers, and educators train workers better, faster, and more efficiently. Of course, the impact doesn’t stop at the bottom line. Immersive learning presents an opportunity to reliably train employees for situations that are expensive to support, challenging to replicate, and even dangerous. And it can be done efficiently, safely, and with better learning outcomes.

1 in every 3 small and mid-size businesses in the U.S. is expected to be piloting a VR employee training program by 2021, seeing their new hires reach full productivity 50% faster as a result.

The worldwide AR and VR market size is forecast to grow nearly 7.7 times between 2018 and 2022.

14 million AR and VR devices are expected to be sold in 2019.

By 2023, enterprise VR hardware and software revenue is expected to jump 587% to $5.5 billion, up from an estimated $800 million in 2018.

Virtual Reality (VR): A computer-generated experience that simulates reality. VR may include visual, auditory, or tactile experiences.

Augmented Reality (AR): A live experience of a physical space, where computer-enhanced visualizations, sounds, or tactile experiences overlay the real-world environment.

Mixed Reality (MR): A blend of virtual experiences and the real world where virtual and augmented experiences are presented simultaneously.

Extended Reality (ER): An immersive experience involving interactions with the real world, virtual reality, augmented reality, as well as other machines or computers adding content to the experience.

Soft Skills. Immersive learning technologies can help people develop human skills, such as empathy, customer service, and improving diversity and inclusion.

Technical Skills. Immersive learning technologies enable workers to learn through simulated experiences, providing the opportunity for risk-free repetition of complex or dangerous technical tasks.

+++++++++++++

more on immersive learning in this IMS blog

https://blog.stcloudstate.edu/ims?s=immersive+learning

https://twitter.com/benhamner/status/1177755933050462208

Federated learning: train machine learning models while preserving user privacy, by keeping user data on device (e.g. mobile phone) and only sending encrypted gradient updates (that can only be decrypted in aggregate) back to the server
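The "only decryptable in aggregate" property can be illustrated with a toy sketch. The code below is not Google's protocol and uses no real cryptography: it stands in for encryption with pairwise random masks that cancel when the server sums all clients' updates. The update values, function names, and the centrally simulated mask exchange are all assumptions made for illustration.

```python
import random


def mask_updates(updates, seed=0):
    """Add pairwise random masks so each update is hidden but the sum is unchanged."""
    rng = random.Random(seed)
    n, dim = len(updates), len(updates[0])
    masked = [list(u) for u in updates]
    for i in range(n):
        for j in range(i + 1, n):
            # Clients i and j share one random mask (simulated centrally here;
            # a real system would derive it from a key exchange between devices).
            mask = [rng.uniform(-1.0, 1.0) for _ in range(dim)]
            for k in range(dim):
                masked[i][k] += mask[k]  # client i adds the shared mask
                masked[j][k] -= mask[k]  # client j subtracts the same mask
    return masked


def aggregate(masked_updates):
    """Server-side average; the pairwise masks cancel out in the sum."""
    n, dim = len(masked_updates), len(masked_updates[0])
    return [sum(u[k] for u in masked_updates) / n for k in range(dim)]


# Hypothetical per-device gradient updates; the raw training data stays on each device.
client_updates = [[0.2, -0.1], [0.4, 0.3], [-0.3, 0.1]]
masked = mask_updates(client_updates)
average = aggregate(masked)
```

The server only ever sees `masked`, where each individual vector looks like noise, yet `average` matches the mean of the original updates up to floating-point rounding.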

https://federated.withgoogle.com/

Fraunhofer-Gesellschaft June 3, 2019

https://phys.org/news/2019-06-machine-sensors.html

Researchers at the Fraunhofer Institute for Microelectronic Circuits and Systems IMS have developed AIfES, an artificial intelligence (AI) concept for microcontrollers and sensors that contains a completely configurable artificial neural network. AIfES is a platform-independent machine learning library which can be used to realize self-learning microelectronics requiring no connection to a cloud or to high-performance computers. The sensor-related AI system recognizes handwriting and gestures, enabling, for example, gesture control of input when the library is running on a wearable.

AIfES is a machine learning library programmed in C that can run on microcontrollers, but also on other platforms such as PCs, Raspberry Pi and Android.

+++++++++++++++++
