Posts Tagged ‘Coding’

beginners learn Python for Data Science

My company released a course for helping beginners learn Python for Data Science. This is an initial draft and we do not plan to monetize it in any way. Please feel free to help us make it better with your suggestions. (from r/programming)

+++++++++++

more on Python on this IMS blog

https://blog.stcloudstate.edu/ims?s=python

Rap hip-hop and physics

A Hip-Hop Experiment

JOHN LELAND NOV. 16, 2012 https://www.nytimes.com/2012/11/18/nyregion/columbia-professor-and-gza-aim-to-help-teach-science-through-hip-hop.html

Only 4 percent of African-American seniors nationally were proficient in sciences, compared with 27 percent of whites, according to the 2009 National Assessment of Educational Progress.

GZA by bringing science into hip-hop; Dr. Emdin by bringing hip-hop into the science classroom.

the popular hip-hop lyrics Web site Rap Genius, will announce a pilot project to use hip-hop to teach science in 10 New York City public schools. The pilot is small, but its architects’ goals are not modest. Dr. Emdin, who has written a book called “Urban Science Education for the Hip-Hop Generation,”

hip-hop “cypher,” participants stand in a circle and take turns rapping, often supporting or playing off one another’s rhymes.

“All of those things that are happening in the hip-hop cypher are what should happen in an ideal classroom.”

++++++++++++++++++++

Students analyze rap lyrics with code in digital humanities class

Some teachers are finding a place for coding in English, music, science, math and social studies, too

by TARA GARCÍA MATHEWSON October 18, 2018

Fifteen states now require all high schools to offer computer science courses. Twenty-three states have created K-12 computer science standards. And 40 states plus the District of Columbia allow students to count computer science courses toward high school math or science graduation requirements. That’s up from 12 states in 2013, when Code.org launched, aiming to expand access to computer science in U.S. schools and increase participation among girls and underrepresented minorities in particular.

Nevada is the only state so far to embed math, science, English language arts and social studies into its computer science standards.

coding ethics unpredictability

Franken-algorithms: the deadly consequences of unpredictable code

by Andrew Smith Thu 30 Aug 2018 01.00 EDT

https://www.theguardian.com/technology/2018/aug/29/coding-algorithms-frankenalgos-program-danger

Between the “dumb” fixed algorithms and true AI lies the problematic halfway house we’ve already entered with scarcely a thought and almost no debate, much less agreement as to aims, ethics, safety, best practice. If the algorithms around us are not yet intelligent, meaning able to independently say “that calculation/course of action doesn’t look right: I’ll do it again”, they are nonetheless starting to learn from their environments. And once an algorithm is learning, we no longer know to any degree of certainty what its rules and parameters are. At which point we can’t be certain of how it will interact with other algorithms, the physical world, or us. Where the “dumb” fixed algorithms – complex, opaque and inured to real time monitoring as they can be – are in principle predictable and interrogable, these ones are not. After a time in the wild, we no longer know what they are: they have the potential to become erratic. We might be tempted to call these “frankenalgos” – though Mary Shelley couldn’t have made this up.

Twenty years ago, George Dyson anticipated much of what is happening today in his classic book Darwin Among the Machines. The problem, he tells me, is that we’re building systems that are beyond our intellectual means to control. We believe that if a system is deterministic (acting according to fixed rules, this being the definition of an algorithm) it is predictable – and that what is predictable can be controlled. Both assumptions turn out to be wrong. “It’s proceeding on its own, in little bits and pieces,” he says. “What I was obsessed with 20 years ago that has completely taken over the world today are multicellular, metazoan digital organisms, the same way we see in biology, where you have all these pieces of code running on people’s iPhones, and collectively it acts like one multicellular organism. “There’s this old law called Ashby’s law that says a control system has to be as complex as the system it’s controlling, and we’re running into that at full speed now, with this huge push to build self-driving cars where the software has to have a complete model of everything, and almost by definition we’re not going to understand it. Because any model that we understand is gonna do the thing like run into a fire truck ’cause we forgot to put in the fire truck.”

Walsh believes this makes it more, not less, important that the public learn about programming, because the more alienated we become from it, the more it seems like magic beyond our ability to affect. When shown the definition of “algorithm” given earlier in this piece, he found it incomplete, commenting: “I would suggest the problem is that algorithm now means any large, complex decision making software system and the larger environment in which it is embedded, which makes them even more unpredictable.” A chilling thought indeed. Accordingly, he believes ethics to be the new frontier in tech, foreseeing “a golden age for philosophy” – a view with which Eugene Spafford of Purdue University, a cybersecurity expert, concurs. Where there are choices to be made, that’s where ethics comes in.

our existing system of tort law, which requires proof of intention or negligence, will need to be rethought. A dog is not held legally responsible for biting you; its owner might be, but only if the dog’s action is thought foreseeable.

model-based programming, in which machines do most of the coding work and are able to test as they go.

As we wait for a technological answer to the problem of soaring algorithmic entanglement, there are precautions we can take. Paul Wilmott, a British expert in quantitative analysis and vocal critic of high frequency trading on the stock market, wryly suggests “learning to shoot, make jam and knit”

The venerable Association for Computing Machinery has updated its code of ethics along the lines of medicine’s Hippocratic oath, to instruct computing professionals to do no harm and consider the wider impacts of their work.

+++++++++++

more on coding in this IMS blog

https://blog.stcloudstate.edu/ims?s=coding

income-share agreements (ISAs)


https://www.linkedin.com/feed/news/a-new-way-to-get-people-into-coding-1765499/

A new way to get people into coding

https://www.theatlantic.com/education/archive/2018/06/an-alternative-to-student-loan-debt/563093/

Code Now. Pay Tuition Later.

Software Carpentry Workshop at SCSU Python

Registration is now open for the workshop:

>>>>>>>>> https://ntmoore.github.io/2018-06-02-stcloud/ <<<<<<<<<<<<<

Syllabus:

The Unix Shell

- Files and directories

- History and tab completion

- Pipes and redirection

- Looping over files

- Creating and running shell scripts

- Finding things

- Reference…

Programming in Python

- Using libraries

- Working with arrays

- Reading and plotting data

- Creating and using functions

- Loops and conditionals

- Defensive programming

- Using Python from the command line

- Reference…

https://en.wikipedia.org/wiki/GNU_nano

https://swcarpentry.github.io/shell-novice/03-create/

http://pad.software-carpentry.org/2018-06-02-stcloud

Jupyter is an IDE (integrated development environment): https://en.wikipedia.org/wiki/Integrated_development_environment

https://searchcloudcomputing.techtarget.com/definition/Infrastructure-as-a-Service-IaaS

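JSON is the file format in which Jupyter data is stored. HTML and Markdown (a simplified alternative to HTML).

pandas: https://pandas.pydata.org/

ReactOS https://en.wikipedia.org/wiki/ReactOS (an open-source operating system; not the same thing as React, the JavaScript library)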

+++++++++++++++++++

more on Software Carpentry workshops on this IMS blog

https://blog.stcloudstate.edu/ims/2017/10/26/software-carpentry-workshop/

THE MODEL-VIEW-CONTROLLER (MVC)

THE MODEL-VIEW-CONTROLLER (MVC) DESIGN PATTERN

Object-oriented programming was originally developed to make code more maintainable, reusable, extensible, and understandable by encapsulating all the functionality behind well-defined interfaces.

React


In computing, React (sometimes styled React.js or ReactJS) is a JavaScript library for building user interfaces.

It is maintained by Facebook, Instagram and a community of individual developers and corporations.

React allows developers to create large web-applications that use data and can change over time without reloading the page. It aims primarily to provide speed, simplicity, and scalability. React processes only user interfaces in applications. This corresponds to View in the Model-View-Controller (MVC) pattern, and can be used in combination with other JavaScript libraries or frameworks in MVC, such as AngularJS.

https://en.wikipedia.org/wiki/React_(JavaScript_library)

++++++++++++++

more on JavaScript in this IMS blog

https://blog.stcloudstate.edu/ims?s=java+script

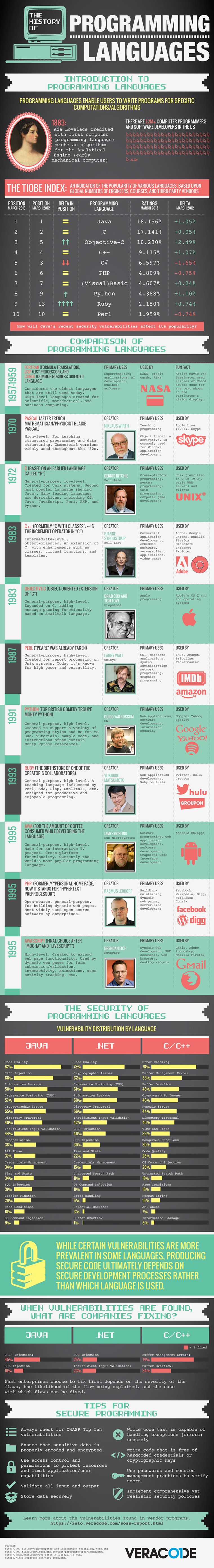

history programming languages

A Brief History of Computer Programming Languages [#Infographic]

Who contributed to the code that we use every day?

by Jimmy Daly April 19, 2013

+++++++++++++++

more on coding in this IMS blog

https://blog.stcloudstate.edu/ims?s=coding

Software Carpentry Workshop

Minnesota State University Moorhead – Software Carpentry Workshop

Reservation code: 680510823 Reservation for: Plamen Miltenoff

Hagen Hall – 600 11th St S – Room 207 – Moorhead

pad.software-carpentry.org/2017-10-27-Moorhead

http://www.datacarpentry.org/lessons/

https://software-carpentry.org/lessons/

++++++++++++++++

Friday

Jeff – certified Bash Python, John

https://ntmoore.github.io/2017-10-27-Moorhead/

what is the shell and what does it do. language close to the computer, fast.

what is “bash” . cd, ls

the shell’s job is to be a translator between the user and the binary code: the middleman. several types of shells, with slight differences. bash is the one natively installed on Mac and Unix. Bourne-again shell

bash commands: cd – change directory; ls – list; ls -F; if it does not work: man ls (manual for ls); the colon in the lower left corner tells you that you can scroll; q to quit; ls -ltr

arguments are colloquially called by different names: options, flags, parameters

cd .. – move up one directory . pwd : print the working directory (ls shows its content) . cd data_shell/ – go down one directory

cd ~ – brings me to my home directory . $HOME (universally defined variable)

the default behavior of cd (with no argument) is to go to the home directory.

the core shell commands accept the same options (letters)

$ du -h . gives me the size of the files. ctrl C to stop

$ clear . – clear the entire screen, scroll up to go back to previous command

man history . $ history . $ !pwd (reruns the most recent command that starts with pwd) . $ history | grep history (piping)

$ cat (and the file name) – standard output

$ cat ../

+++++++++++++++

how to edit and delete files

to create new folder: $ mkdir . – make directory

text editors – nano, vim (UNIX text editors) . $ nano draft.txt . ctrl O (save) ctrl X (exit) .

$ vim . esc (key) and then in the command line – :wq (write and quit) or just “:q”

$ mv draft.txt ../data . (move files)

to remove $ rm thesis/: $ man rm

copy files: $ cp . $ touch (touches the file, creates it if new)

remove: $ rm . anything run with sudo is dangerous . Bash profile: cp -i (asks before overwriting)

* – wild card . $ ls analyzed (list of the analyzed directory)

stackoverflow web site .

+++++++++++++++++

head command . $ head basilisk.dat (check only the first several lines of a large file)

$ for filename in basilisk.dat unicorn.dat . (making a loop = multiline)

> do . (the shell is expecting an action)

> head -n 3 $filename . (-n sets the number of lines; 3 displays the first three lines of the file)

> done

for doing repetitive functions
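Since the second day of the workshop moves on to Python, here is a rough Python counterpart of the head loop above. It is only a sketch: the helper name head and the file contents are invented for illustration, and the files are written to a temporary directory so that it runs anywhere.

```python
import tempfile
from itertools import islice
from pathlib import Path


def head(path, n=3):
    """Return the first n lines of a text file, similar to `head -n`."""
    with open(path) as handle:
        return [line.rstrip("\n") for line in islice(handle, n)]


file_names = ("basilisk.dat", "unicorn.dat")

with tempfile.TemporaryDirectory() as tmp:
    # Throwaway files: the names echo the notes, the contents are made up.
    for name in file_names:
        lines = [f"{name} line {i}" for i in range(1, 6)]
        Path(tmp, name).write_text("\n".join(lines))

    # The loop itself: do the same thing to every file in the list.
    first_lines = {name: head(Path(tmp, name)) for name in file_names}

print(first_lines["basilisk.dat"])
```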

also

$ for filename in *.dat ; do head -n 3 $filename ; done

$ for filename in *.dat ; do echo $filename ; head -n 3 $filename ; done

$ echo $filename (print statement)

how to loop

$ for filename in *.dat ; do echo $filename ; echo head -n 3 $filename ; done

ctrl C or Apple cmd + period to get out of the loop

http://swcarpentry.github.io/shell-novice/02-filedir/

also

$ for filename in *.dat

> do

> echo $filename

> head -n 10 $filename | tail -n 20 . (head -n 10: first ten lines; tail -n 20: last twenty lines)

> done

$ for filename in *.dat

do

>> echo $filename

>> done

$ for filename in *.dat

>> do

>> cp $filename orig_$filename

>> done

$ history > something.else

$ head something.else

+++++++++++++

another function: word count

$ wc *.pdb (protein databank)

$ head cubane.pdb

if I don’t know how to read the output: $ man wc

the difference between “*” and “?”: * matches any number of characters, ? matches exactly one

$ wc -l *.pdb

$ wc -l *.pdb > lengths.txt

$ cat lengths.txt

$ for fil in *.txt

>>> do

>>> wc -l $fil

>>> done

putting a $ sign in front of a name uses the value of the variable, not the literal text.

++++++++++++

$ nano middle.sh . The entire point of shell is to automate

$ bash (executable) to run the program middle.sh

rwx – rwx – rwx . (owner – group -anybody)

bash middle.sh

$ file middle.sh

$PATH .

$ echo $PATH | tr ":" "\n"

/usr/local/bin

/usr/bin

/bin

/usr/sbin

/sbin

/Applications/VMware Fusion.app/Contents/Public

/usr/local/munki
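For comparison, the same one-directory-per-line listing can be sketched in Python. This is only an illustration and not part of the workshop material; it reads whatever PATH is set on the machine where it runs.

```python
import os

# os.pathsep is ":" on macOS and Linux (";" on Windows).
path_value = os.environ.get("PATH", "")
directories = path_value.split(os.pathsep)

# One directory per line, like piping through tr ":" "\n".
for directory in directories:
    print(directory)
```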

$ export PATH=$PWD:$PATH

(this is to make sure that the last version of Python is running)

$ ls ~ . (hidden files are not listed)

$ ls -a ~ . (-a also lists hidden files, the ones whose names start with a dot)

$ touch .bash_profile .bashrc

$ history | grep PATH

19 echo $PATH

44 echo #PATH | tr ":" "\n"

45 echo $PATH | tr ":" "\n"

46 export PATH=$PWD:$PATH

47 echo #PATH | tr ":" "\n"

48 echo #PATH | tr ":" "\n"

55 history | grep PATH

wc -l "$@" | sort -n ($@ – encompasses everything; it will process every single file in the list of files)

$ chmod +x middle.sh (make it executable)

$ find . -type d . (find only directories, recursively)

$ find . -type f (files, instead of directories)

$ find . -name '*.txt' . (find files by name, don’t forget single quotes)

$ wc -l $(find . -name '*.txt') – when searching among directories on different levels

$ find . -name '*.txt' | xargs wc -l – same as above; two ways to do one and the same
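A Python sketch of the same idea (find every .txt file below a directory and count its lines) might look like the following. The function name count_lines and the sample files are assumptions made up for this illustration; the files live in a temporary directory so nothing on disk is touched.

```python
import tempfile
from pathlib import Path


def count_lines(root, pattern="*.txt"):
    """Return {relative path: line count} for matching files anywhere under root."""
    counts = {}
    for path in sorted(Path(root).rglob(pattern)):  # rglob searches recursively
        if path.is_file():
            with path.open() as handle:
                counts[str(path.relative_to(root))] = sum(1 for _ in handle)
    return counts


# Build a small throwaway directory tree with files on two levels.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "sub").mkdir()
    (root / "a.txt").write_text("one\ntwo\n")
    (root / "sub" / "b.txt").write_text("one\n")
    (root / "c.dat").write_text("ignored\n")
    counts = count_lines(root)

print(counts)
```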

+++++++++++++++++++

Saturday

Python

C and C++. scripting purposes in microbiology (instructor). libraries, packages alongside Python, which can extend its functionality. numpy and scipy (numeric and science python). Python for academic libraries?

going out of Python: >>> quit() . Python expects the opening and closing parentheses

new terminal needed after installation. anaconda 5.0.1

Python 3 is a complete redesign, not only an update.

http://swcarpentry.github.io/python-novice-gapminder/setup/

jupyter crashes in safari. open in chrome. spg engine maybe

https://swcarpentry.github.io/python-novice-gapminder/01-run-quit/

to start python in the terminal $ python

>> variable = 3

>> variable +10

several data types.
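A small sketch of the two points above, a variable used in an expression and a few of the built-in data types. The example values are chosen only for illustration.

```python
# Assign a value to a name, then use the name in an expression.
variable = 3
result = variable + 10  # the name stands for its value: 3 + 10

# A few built-in data types.
examples = [3, 3.0, "three", True]
type_names = [type(value).__name__ for value in examples]

print(result)
print(type_names)
```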

stored in JSON format.

command vs edit code. code cell is the gray box. a text cell is plain text
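To make the JSON point concrete, here is a hand-written and heavily simplified stand-in for a notebook file, read with the standard json module. Real notebook files carry more fields than this, so treat it as a sketch of the general shape (a list of cells, each marked as code or markdown) and not as the full format.

```python
import json

# Simplified stand-in for the text of a notebook file (an assumption
# for illustration; real files also hold metadata, outputs, and so on).
notebook_text = """
{
  "cells": [
    {"cell_type": "markdown", "source": ["# Notes"]},
    {"cell_type": "code", "source": ["variable = 3"]}
  ]
}
"""

notebook = json.loads(notebook_text)

# Each cell records whether it is a text (markdown) cell or a code cell.
cell_types = [cell["cell_type"] for cell in notebook["cells"]]
print(cell_types)
```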

markdown syntax. format working with git and github . search explanation in https://swcarpentry.github.io/python-novice-gapminder/01-run-quit/

hackMD https://hackmd.io/ (use your GitHub account)

PANDOC – translates different data formats. https://pandoc.org/

print is a function

in what cases will I run my data through Python instead of SPSS?

python is a 0 based language. starts counting with 0 – Java, C, P

atom_name = 'helium'

print(atom_name[0]) string slicing and indexing is tricky

print(atom_name[0:6])

print(atom_name[7]) IndexError: index 7 is past the end of the six-character string

print(atom_name[::-1])

muillyreb muihtil muileh (output when atom_name = 'helium lithium beryllium'; for 'helium' alone it is muileh)

len(atom_name) 6 . case sensitive
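The indexing and slicing notes above can be collected into one runnable sketch. It assumes atom_name holds the six-letter string 'helium'.

```python
atom_name = "helium"

first = atom_name[0]         # indexing starts at 0
whole = atom_name[0:6]       # slice: start at 0, stop before 6
backwards = atom_name[::-1]  # a step of -1 walks the string in reverse
length = len(atom_name)

# Index 7 is past the end, so plain indexing raises IndexError.
try:
    atom_name[7]
    out_of_range = False
except IndexError:
    out_of_range = True

# Slices are more forgiving: an out-of-range slice is simply empty.
empty = atom_name[7:]

print(first, whole, backwards, length, out_of_range, repr(empty))
```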

a method is attached to data that already exists and is called on that data with a dot; that is the difference from a function
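A short sketch of the distinction, using the built-in function len and the string method upper as examples:

```python
atom_name = "helium"

# len() is a function: the data is passed in as an argument.
length = len(atom_name)

# upper() is a method: it is looked up on the string itself with a dot.
shouted = atom_name.upper()

# The method returns a new string; the original is left unchanged.
print(length, shouted, atom_name)
```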

/Users/plamen_local/anaconda3/lib/python3.6/site-packages/pandas/__init__.py

import pandas

import matplotlib.pyplot as plt

data = pandas.read_csv('/Users/plamen_local/Desktop/data/gapminder_gdp_oceania.csv', index_col='country')

data.loc['Australia'].plot()

plt.xticks(rotation=10)
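To show what indexing by country and looking up one row amounts to, here is a sketch that deliberately swaps pandas for the standard csv module, so it runs without the data file or any installed packages. The column names and numbers are invented placeholders, not real gapminder figures.

```python
import csv
import io

# Placeholder rows standing in for a country-indexed CSV file.
# The numbers are invented for illustration, not real GDP figures.
csv_text = """country,year_a,year_b
Australia,100.0,150.0
New Zealand,90.0,120.0
"""

# Key each row by its 'country' column, roughly what index_col='country' does.
table = {}
for row in csv.DictReader(io.StringIO(csv_text)):
    country = row.pop("country")
    table[country] = {column: float(value) for column, value in row.items()}

# Look up one row by its label, similar in spirit to data.loc['Australia'].
australia = table["Australia"]
print(australia)
```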

ggplot2 (in R) is the most well-known plotting library.

xelatex is a PDF engine. reST (reStructuredText) is like Markdown. google what is the best PDF engine with Jupyter

for loops . any computer language will have the concept of a “for” loop. In Python: 1. whenever we create a “for” loop, that line must end with a single colon

2. indentation. any “if” statement in the “for” loop, gets indented
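Both rules in one minimal sketch; the list of values is made up for illustration.

```python
masses = [1.0, 4.0, 7.0, 9.0]  # illustrative values

heavy = []
for mass in masses:         # 1. the "for" line ends with a colon
    if mass > 5.0:          # 2. the "if" is indented inside the loop
        heavy.append(mass)  #    and its body is indented once more

# Back at the left margin: this runs once, after the loop has finished.
print(heavy)
```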