free Chemistry online resources

Per the SCSU OER blog – http://blog.stcloudstate.edu/oer/2020/04/05/free-chemistry-resources/:

+++++++++++++

More on chemistry in this IMS blog

https://blog.stcloudstate.edu/ims?s=chemistry

Digital Literacy for St. Cloud State University

Alan and Rachel lead their meteorology students in AltspaceVR

https://hybridpedagogy.org/our-bodies-encoded-algorithmic-test-proctoring-in-higher-education/

While in-person test proctoring has been used to combat test-based cheating, this can be difficult to translate to online courses. Ed-tech companies have sought to address this concern by offering to watch students take online tests, in real time, through their webcams.

Some of the more prominent companies offering these services include Proctorio, Respondus, ProctorU, HonorLock, Kryterion Global Testing Solutions, and Examity.

Algorithmic test proctoring’s settings have discriminatory consequences across multiple identities and serious privacy implications.

While racist technology calibrated for white skin isn’t new (everything from photography to soap dispensers does this), we see it deployed through the face detection and facial recognition used by algorithmic proctoring systems.

While some test proctoring companies develop their own facial recognition software, most purchase software developed by other companies, but these technologies generally function similarly and have shown a consistent inability to identify people with darker skin or even tell the difference between Chinese people. Facial recognition literally encodes the invisibility of Black people and the racist stereotype that all Asian people look the same.

As Os Keyes has demonstrated, facial recognition has a terrible history with gender. This means that software asking students to verify their identity is compromising for students who identify as trans or non-binary, or who express their gender in ways counter to cis/heteronormativity.

These features and settings create a system of asymmetric surveillance and lack of accountability, things which have always created a risk for abuse and sexual harassment. Technologies like these have a long history of being abused, largely by heterosexual men at the expense of women’s bodies, privacy, and dignity.

The proctoring companies’ promotional messaging functions similarly to dog-whistle politics, which is commonly used in anti-immigration rhetoric. It’s also not a coincidence that these technologies are being used to exclude people an institution does not want; biometrics and facial recognition have been connected to anti-immigration policies, supported by both Republican and Democratic administrations, going back to the 1990s.

Borrowing from Henry A. Giroux, Kevin Seeber describes the pedagogy of punishment and some of its consequences with regard to higher education’s approach to plagiarism in his book chapter “The Failed Pedagogy of Punishment: Moving Discussions of Plagiarism beyond Detection and Discipline.”

my note: I have been repeating this for years

Sean Michael Morris and Jesse Stommel’s ongoing critique of Turnitin, a plagiarism detection software, outlines exactly how this logic operates in ed-tech and higher education: 1) don’t trust students, 2) surveil them, 3) ignore the complexity of writing and citation, and 4) monetize the data.

Cheating is not a technological problem, but a social and pedagogical problem.

Our habit of believing that technology will solve pedagogical problems is endemic to narratives produced by the ed-tech community and, as Audrey Watters writes, is tied to the Silicon Valley culture that often funds it. Scholars have been dismantling the narrative of technological solutionism and neutrality for some time now. In her book “Algorithms of Oppression,” Safiya Umoja Noble demonstrates how the algorithms that are responsible for Google Search amplify and “reinforce oppressive social relationships and enact new modes of racial profiling.”

Anna Lauren Hoffmann coined the term “data violence” to describe the impact harmful technological systems have on people and how these systems retain the appearance of objectivity despite the disproportionate harm they inflict on marginalized communities.

This system of measuring bodies and behaviors, associating certain bodies and behaviors with desirability and others with inferiority, engages in what Lennard J. Davis calls the Eugenic Gaze.

Higher education is deeply complicit in the eugenics movement. Nazism borrowed many of its ideas about racial purity from the American school of eugenics, and universities were instrumental in supporting eugenics research by publishing copious literature on it, establishing endowed professorships, institutes, and scholarly societies that spearheaded eugenic research and propaganda.

+++++++++++++++++

more on privacy in this IMS blog

https://blog.stcloudstate.edu/ims?s=privacy

+++++++++++++++++

more on flipgrid in this IMS blog

https://blog.stcloudstate.edu/ims?s=flipgrid

+++++++++

https://blog.zoom.us/wordpress/2020/03/20/keep-the-party-crashers-from-crashing-your-zoom-event/

++++++++++++++

The Intercept reported that Zoom video calls are not end-to-end encrypted, despite the company’s claims that they are.

Motherboard reports that Zoom is leaking the email addresses of “at least a few thousand” people because personal addresses are treated as if they belong to the same company.

Apple was forced to step in to secure millions of Macs after a security researcher found that Zoom had not disclosed that it installed a secret web server on users’ Macs, and that Zoom failed to remove the server when the client was uninstalled.

+++++++++++++

Security researchers have called Zoom “a privacy disaster” and “fundamentally corrupt” as allegations of the company mishandling user data snowball.

A report from Motherboard found Zoom sends data from users of its iOS app to Facebook for advertising purposes, even if the user does not have a Facebook account.

++++++++++++++++

Zoom’s security and privacy problems are snowballing (discussion on r/technology)

+++++++++++++++++++

these Twitter threads are informative:

Zoom has skyrocketed to 200 million daily active users. That’s almost the size of Snapchat (218m)

The next generation of social apps will feel more like Zoom than Snapchat

— Greg Isenberg (@gregisenberg) April 2, 2020

I used to thoroughly love @zoom_us as a platform for collaborating. I still use it. But it’s not something that I would recommend to others anymore. Here’s a thread as to why:

— George Siemens (@gsiemens) April 1, 2020

+++++++++++++++++

Holding Class on Zoom? Beware of These Hacks, Hijinks and Hazards https://t.co/JO0jStW8Bm pic.twitter.com/5WC74i4o7M

— Ana Cristina Pratas (@AnaCristinaPrts) April 8, 2020

From setting a clear agenda to testing your tech setup, here’s how to make video calls more tolerable for you and your colleagues.

The Zoom app, for example, has a setting that lets hosts see if you have switched away from the Zoom app for more than 30 seconds — a dead giveaway that you aren’t paying attention.

free peer support and resources:

https://moodle4teachers.org/course/view.php?id=276

160 educators from around the world have joined the free online course Live Online Virtual Engagement (L.O.V.E.).

++++++++++++++++++++

https://www.nngroup.com/articles/remote-ux/

capture qualitative insights from video recordings and think-aloud narration from users: https://lookback.io/ https://app.dscout.com/sign_in https://userbrain.net/

capture quantitative metrics such as time spent and success rate: https://konceptapp.com/

Many platforms, such as UserZoom and UserTesting, have both qualitative and quantitative capabilities.

Whiteboards: https://miro.com/ and https://mural.co/

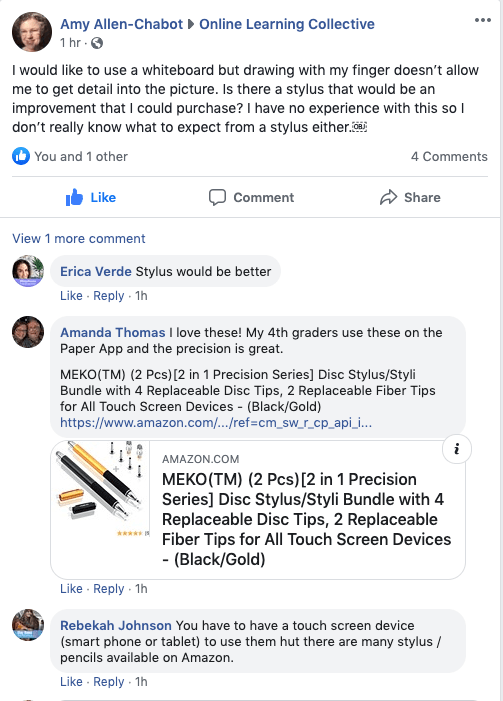

Want to discuss precision drawing on electronic devices? Talk to us; we can help.

++++++++++++++++

more on stylus use in this IMS blog

https://blog.stcloudstate.edu/ims?s=stylus