Posted by SPIEGEL International on Friday, January 15, 2021

Can We Develop Herd Immunity to Internet Propaganda?

Internet propaganda is becoming an industrialized commodity, warns Phil Howard, the director of the Oxford Internet Institute and author of many books on disinformation. In an interview, he calls for greater transparency and regulation of the industry.

Platforms like Parler, TheDonald, Breitbart and Anon are like petri dishes for testing out ideas, to see what sticks. If extremist influencers see that something gets traction, they ramp it up. In the language of disease, you would say these platforms act as a vector, carrying the disease into other, more public forums.

At some point, a major influencer takes a new meme from one of these extremist forums and puts it out before a wider audience. It works like a vector-borne disease such as malaria, where mosquitoes do the transmission. So maybe a Hollywood actor or an influencer who knows nothing about politics will take this idea and post it on a bigger, better-known platform. From there, these memes escalate as they move from Parler to maybe Reddit, and from there to Twitter, Facebook, Instagram and YouTube. We call this “cascades of misinformation.”

Sometimes the cascades of misinformation bounce from country to country, for example between the U.S., Canada and the UK, so the misinformation echoes back and forth.

Within Europe, two reservoirs for disinformation stick out: Poland and Hungary.

Our 2020 report shows that cyber troop activity continues to increase around the world. This year, we found evidence of 81 countries using social media to spread computational propaganda and disinformation about politics. This is up from last year’s report, in which we identified 70 countries with cyber troop activity.

We identified 63 new instances of private firms working with governments or political parties to spread disinformation about elections or other important political issues. By comparison, we identified 21 such cases in 2017-2018 and only 15 in the period between 2009 and 2016.

Why would well-funded Russian agencies buy disinformation services from a newcomer like Nigeria?

(1) Russian actors have found a lab in Nigeria that can provide services at competitive prices. (2) Countries like China and Russia also seem to be developing an interest in political influence in many African countries, so there may be a service industry for disinformation in Nigeria serving that part of the world.

Each social media company should provide some kind of accounting statement about how it deals with misuse: reporting hate speech, fact-checking, jury systems and so on. This system of transparency and accountability works for stock markets, so why shouldn’t it work in the social media realm?

We clearly need a digital civics curriculum. Twelve- to 16-year-olds are developing their media attitudes now, and they will be voting soon. There is very good media education in Canada and the Netherlands, for example, and that is an excellent long-term strategy.

++++++++++++

more on fake news in this IMS blog

https://blog.stcloudstate.edu/ims?s=fake+news

https://www.theguardian.com/society/2020/nov/29/how-to-deal-with-a-conspiracy-theorist-5g-covid-plandemic-qanon

Fake authority

You may see articles by Vernon Coleman, for instance. As a former GP he would seem to have some credentials, yet he has a history of supporting pseudoscientific ideas, including misinformation about the causes of Aids. David Icke, meanwhile, has hosted videos by Barrie Trower, an alleged expert on 5G who is, in reality, a secondary school teacher. And Piers Corbyn cites reports by the Centre for Research on Globalisation, which sounds impressive but was founded by a 9/11 conspiracy theorist.

Another approach is “pre-suasion”: essentially, removing the reflexive mental blocks that might make them reject your arguments.

+++++++++++++

more on conspiracy theories in this IMS blog

https://blog.stcloudstate.edu/ims?s=conspiracy

more on fake news in this IMS blog

https://blog.stcloudstate.edu/ims?s=fake+news

https://podcasts.apple.com/us/podcast/ted-radio-hour/id523121474?i=1000496568099

False information on the internet makes it harder and harder to know what’s true, and the consequences have been devastating. This hour, TED speakers explore ideas around technology and deception. Guests include law professor Danielle Citron, journalist Andrew Marantz, and computer scientist Joy Buolamwini.

+++++++++++++

more on deep fake in this IMS blog

https://blog.stcloudstate.edu/ims?s=deepfake

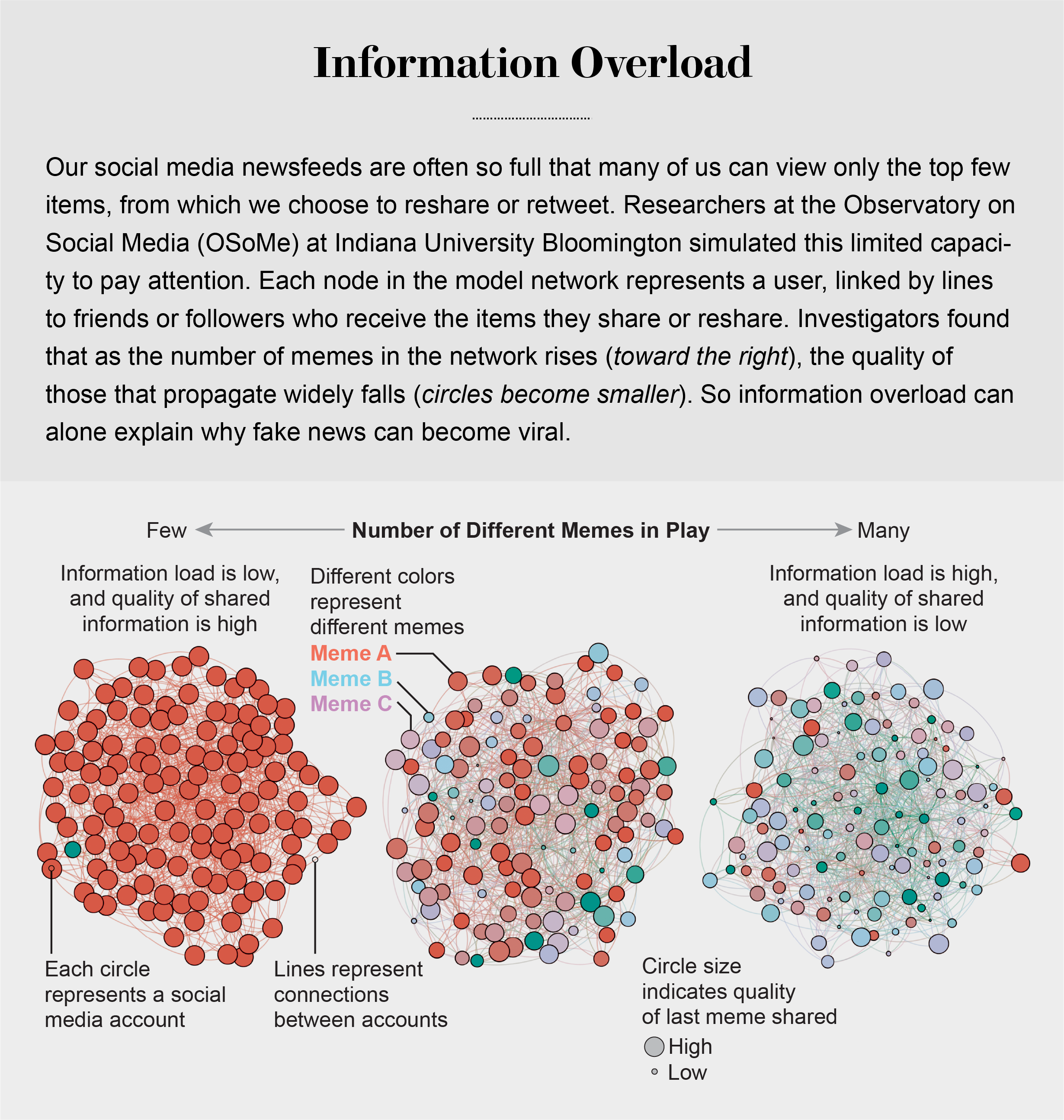

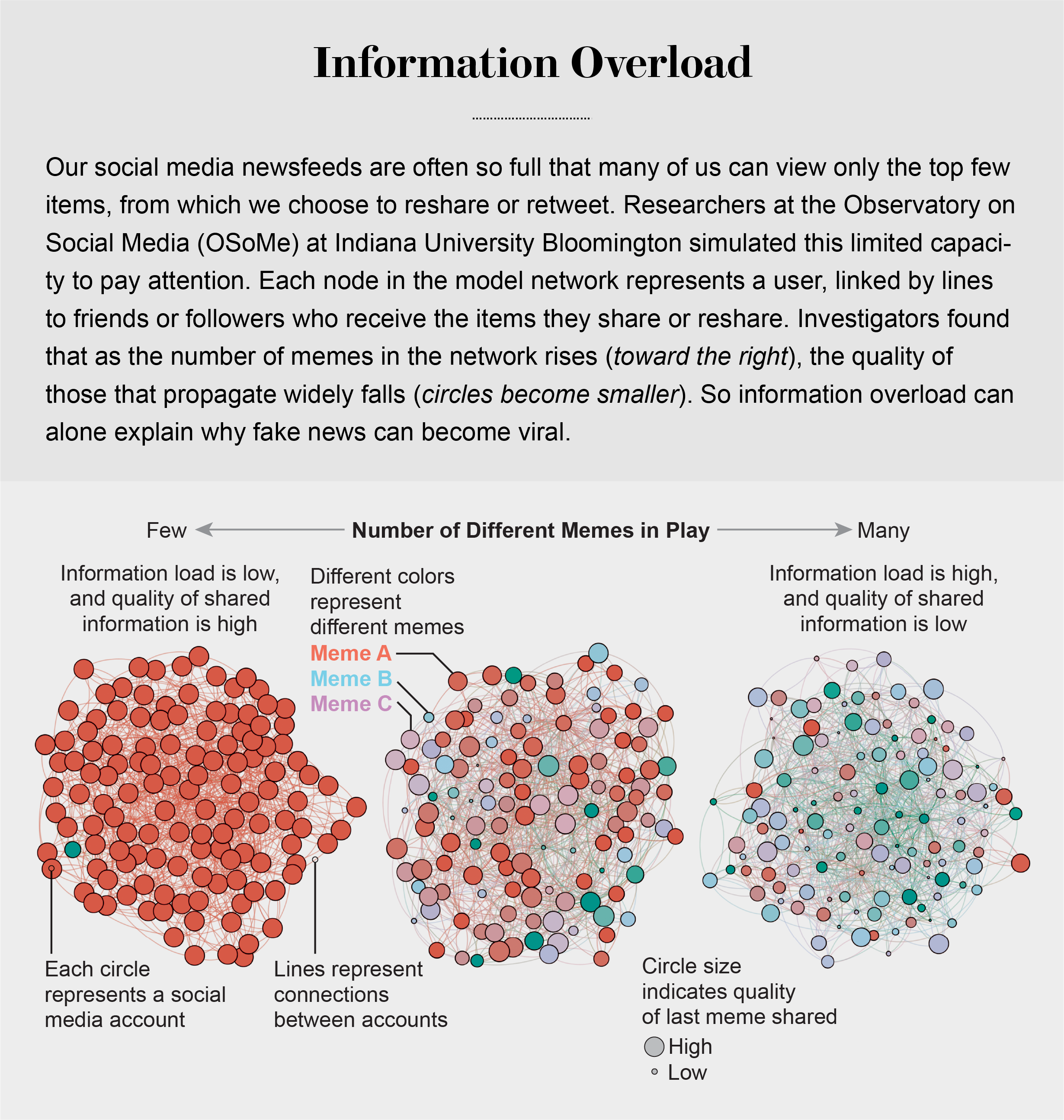

Information Overload Helps Fake News Spread, and Social Media Knows It

Understanding how algorithm manipulators exploit our cognitive vulnerabilities empowers us to fight back

https://www.scientificamerican.com/article/information-overload-helps-fake-news-spread-and-social-media-knows-it/

A minefield of cognitive biases.

People who behaved in accordance with them—for example, by staying away from the overgrown pond bank where someone said there was a viper—were more likely to survive than those who did not.

Compounding the problem is the proliferation of online information. Viewing and producing blogs, videos, tweets and other units of information called memes has become so cheap and easy that the information marketplace is inundated. My note: folksonomy at its worst.

At the University of Warwick in England and at Indiana University Bloomington’s Observatory on Social Media (OSoMe, pronounced “awesome”), our teams are using cognitive experiments, simulations, data mining and artificial intelligence to comprehend the cognitive vulnerabilities of social media users.

The teams are also developing analytical and machine-learning aids to fight social media manipulation.

As Nobel Prize–winning economist and psychologist Herbert A. Simon noted, “What information consumes is rather obvious: it consumes the attention of its recipients.”

attention economy

Our models revealed that even when we want to see and share high-quality information, our inability to view everything in our news feeds inevitably leads us to share things that are partly or completely untrue.
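To make the limited-attention point concrete, here is a minimal toy sketch in Python. It is not the authors' model: the `Meme` class, the 0-to-1 quality scale and the `choose_to_share` helper are hypothetical. It assumes a user always shares the best item they actually see, yet that user still ends up sharing a weaker item once the better ones have scrolled out of view.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Meme:
    name: str
    quality: float  # hypothetical scale: 0.0 = false, 1.0 = accurate


def choose_to_share(feed: list[Meme], attention: int) -> Meme:
    """Share the highest-quality meme the user actually sees.

    The feed is ordered oldest -> newest; a user with limited attention
    only looks at the `attention` most recent items.
    """
    if attention < 1 or not feed:
        raise ValueError("need a non-empty feed and attention >= 1")
    visible = feed[-attention:]  # anything older is never seen
    return max(visible, key=lambda m: m.quality)


feed = [
    Meme("careful fact-check", 0.95),
    Meme("accurate report", 0.80),
    Meme("half-true rumor", 0.40),
    Meme("fabricated claim", 0.10),
]

print(choose_to_share(feed, attention=4).name)  # careful fact-check
print(choose_to_share(feed, attention=2).name)  # half-true rumor
```

The user's preference for quality never changes between the two calls; only the size of the window does, which is the point the paragraph above makes about overloaded feeds.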

Frederic Bartlett

Cognitive biases greatly worsen the problem.

We now know that our minds do this all the time: they adjust our understanding of new information so that it fits in with what we already know. One consequence of this so-called confirmation bias is that people often seek out, recall and understand information that best confirms what they already believe.

This tendency is extremely difficult to correct.

Making matters worse, search engines and social media platforms provide personalized recommendations based on the vast amounts of data they have about users’ past preferences.

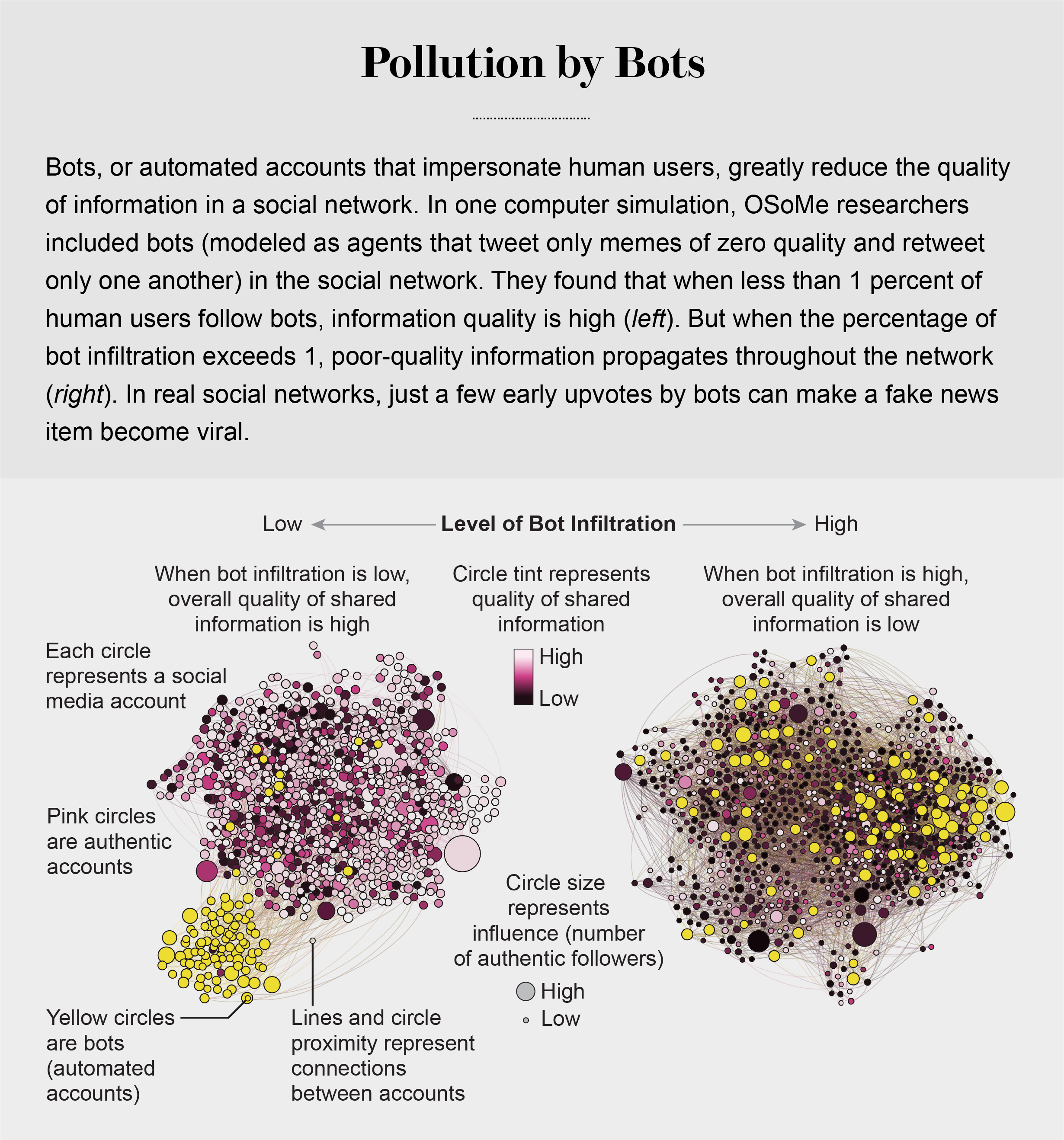

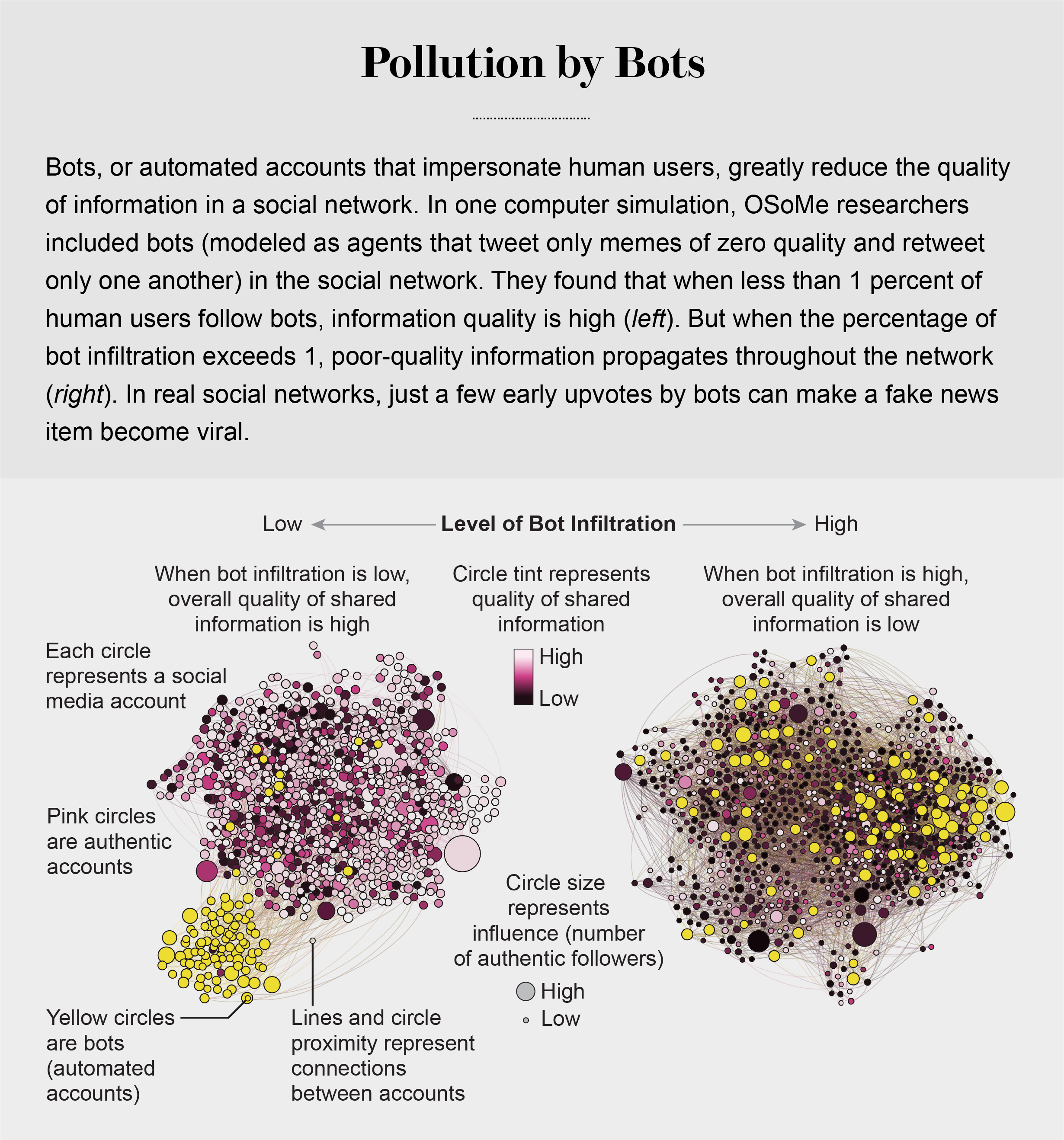

Pollution by Bots

Social Herding

Social groups create a pressure toward conformity so powerful that it can overcome individual preferences, and, by amplifying random early differences, it can cause segregated groups to diverge to extremes.

Social media follows a similar dynamic. We confuse popularity with quality and end up copying the behavior we observe.

Information is transmitted via “complex contagion”: when we are repeatedly exposed to an idea, typically from many sources, we are more likely to adopt and reshare it.
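One common way to formalize complex contagion is a threshold model: an account adopts (reshares) an idea only once at least a threshold number of its neighbors have. The minimal Python sketch below compares a threshold of 1 (simple contagion) with a threshold of 2 (complex contagion) on a small made-up network. The graph, the seed accounts and the helper names are hypothetical, not taken from the article.

```python
def build_graph(edges):
    """Undirected adjacency sets from a list of (u, v) pairs."""
    graph = {}
    for u, v in edges:
        graph.setdefault(u, set()).add(v)
        graph.setdefault(v, set()).add(u)
    return graph


def spread(graph, seeds, threshold):
    """Threshold model: a node adopts once >= `threshold` neighbors have adopted."""
    adopted = set(seeds)
    changed = True
    while changed:  # repeat until no new node adopts
        changed = False
        for node, neighbors in graph.items():
            if node not in adopted and len(neighbors & adopted) >= threshold:
                adopted.add(node)
                changed = True
    return adopted


# Hypothetical network: a tight cluster (a-d) plus a thin chain d-e-f.
edges = [("a", "b"), ("a", "c"), ("a", "d"), ("b", "c"), ("b", "d"),
         ("c", "d"), ("d", "e"), ("e", "f")]
graph = build_graph(edges)

simple = spread(graph, {"a", "b"}, threshold=1)    # one exposure is enough
complex_ = spread(graph, {"a", "b"}, threshold=2)  # needs two distinct sources

print(sorted(simple))    # ['a', 'b', 'c', 'd', 'e', 'f']
print(sorted(complex_))  # ['a', 'b', 'c', 'd']
```

With a threshold of 2, the idea saturates the dense cluster, where each account hears it from several neighbors, but stops at the thin chain, where account `e` only ever gets one exposure.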

In addition to showing us items that conform with our views, social media platforms such as Facebook, Twitter, YouTube and Instagram place popular content at the top of our screens and show us how many people have liked and shared something. Few of us realize that these cues do not provide independent assessments of quality.

Programmers who design the algorithms for ranking memes on social media assume that the “wisdom of crowds” will quickly identify high-quality items; they use popularity as a proxy for quality. My note: again, ill-conceived folksonomy.
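A toy illustration of why popularity is a poor proxy: the ranker below sees only share counts, while each item's quality stays hidden from it. The `Item` class, the titles and the numbers are invented for illustration, and real platform ranking is far more complex than a single sort.

```python
from dataclasses import dataclass


@dataclass
class Item:
    title: str
    quality: float  # hidden "true" quality (0..1); the ranker never reads it
    shares: int     # visible popularity signal, which herding and bots can inflate


def rank_by_popularity(items: list[Item]) -> list[Item]:
    # Popularity used as a proxy for quality: most-shared first.
    return sorted(items, key=lambda it: it.shares, reverse=True)


def rank_by_quality(items: list[Item]) -> list[Item]:
    # What an ideal ranker with access to true quality would do.
    return sorted(items, key=lambda it: it.quality, reverse=True)


items = [
    Item("careful explainer", quality=0.9, shares=120),
    Item("viral hoax", quality=0.1, shares=900),
    Item("solid news report", quality=0.8, shares=300),
]

print([it.title for it in rank_by_popularity(items)])
# ['viral hoax', 'solid news report', 'careful explainer']
print([it.title for it in rank_by_quality(items)])
# ['careful explainer', 'solid news report', 'viral hoax']
```

The two orderings are exact opposites here: once share counts are driven by herding or bots rather than by independent judgments, ranking by popularity puts the lowest-quality item on top.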

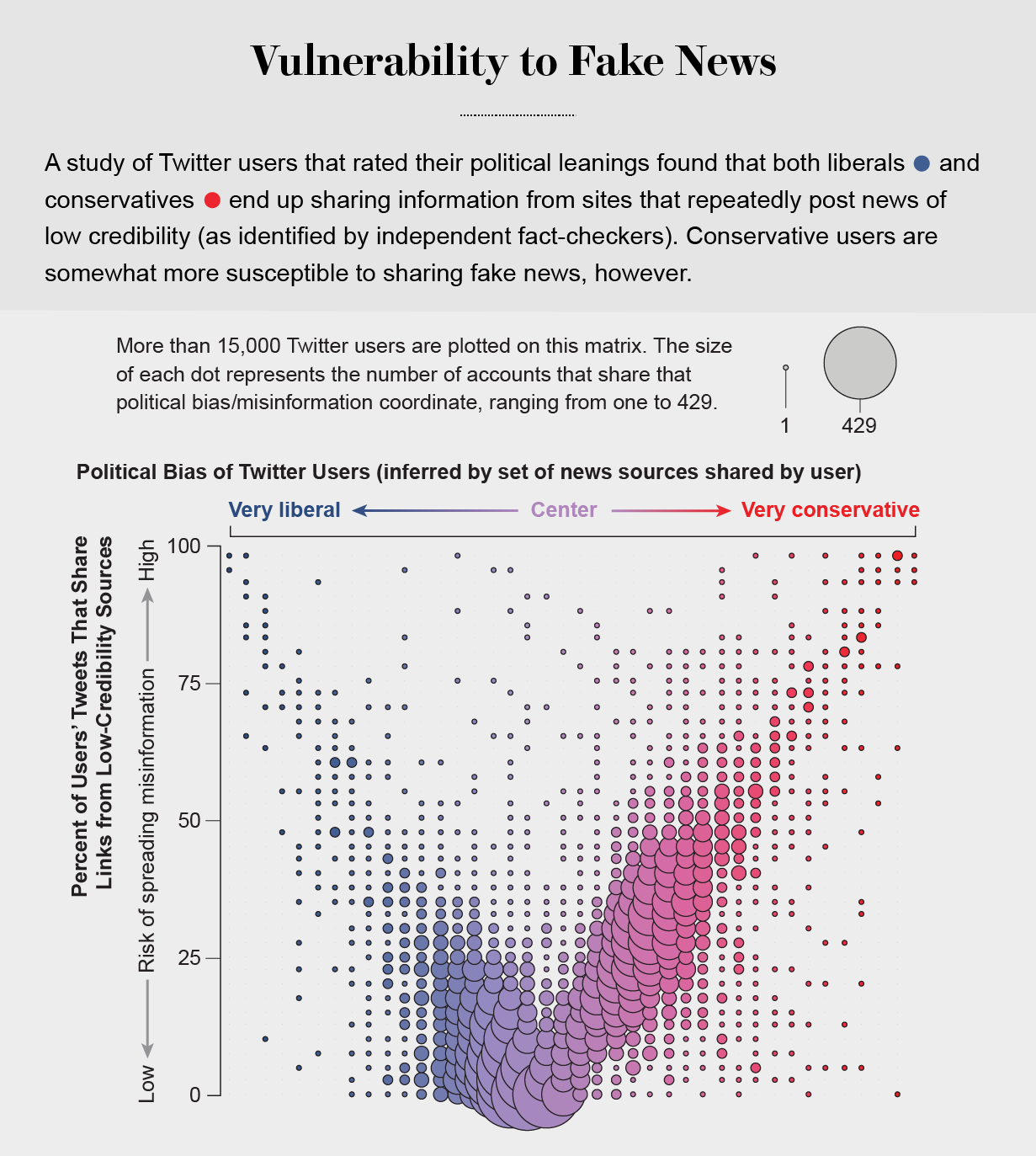

Echo Chambers

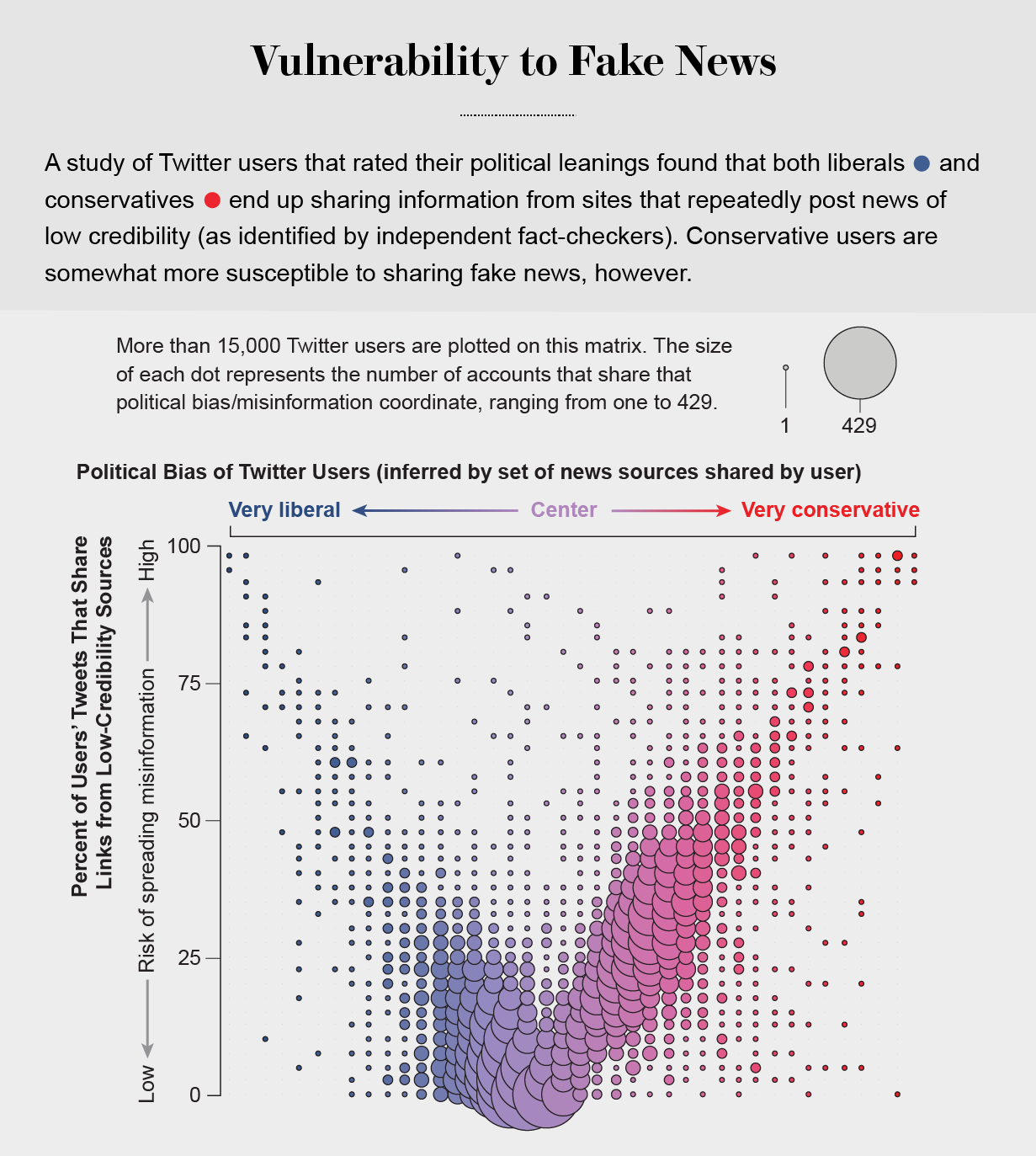

The political echo chambers on Twitter are so extreme that individual users’ political leanings can be predicted with high accuracy: you have the same opinions as the majority of your connections. This chambered structure efficiently spreads information within a community while insulating that community from other groups.
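The simplest version of "you have the same opinions as the majority of your connections" is a majority-vote predictor. The Python sketch below is a baseline under that assumption, not the method used in the research described above. The users, connections and labels are hypothetical.

```python
from collections import Counter


def predict_leaning(user, follows, known):
    """Predict a user's leaning as the most common leaning among their connections."""
    # Labels of the user's connections whose leaning is already known.
    labels = [known[c] for c in follows.get(user, []) if c in known]
    if not labels:
        return None  # no labeled connections: no prediction
    return Counter(labels).most_common(1)[0][0]


# Hypothetical data: who each user is connected to, and known leanings.
follows = {
    "u1": ["a", "b", "c", "d"],
    "u2": ["d", "e", "a"],
    "u3": ["z"],
}
known = {"a": "left", "b": "left", "c": "left", "d": "right", "e": "right"}

print(predict_leaning("u1", follows, known))  # left
print(predict_leaning("u2", follows, known))  # right
print(predict_leaning("u3", follows, known))  # None
```

In a strongly chambered network, a rule this crude already performs well, because almost all of a user's connections share one label.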

Socially shared information not only bolsters our biases but also becomes more resilient to correction.

We have also developed machine-learning algorithms to detect social bots. One of these, Botometer, is a public tool that extracts 1,200 features from a given Twitter account to characterize its profile, friends, social network structure, temporal activity patterns, language and other features. The program compares these characteristics with those of tens of thousands of previously identified bots to give the Twitter account a score for its likely use of automation.
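Botometer's actual classifier and API are not reproduced here. For a rough intuition of "compare an account's features with those of known bots and output a score", the toy sketch below substitutes a k-nearest-neighbors vote over three hypothetical, pre-scaled features instead of 1,200. The feature names, the labeled examples and `bot_score` are all invented for illustration.

```python
import math

# Hypothetical features, each pre-scaled to 0..1:
# (posting_rate, retweet_ratio, profile_completeness)
labeled = [
    ((0.90, 0.95, 0.10), True),   # previously identified bots
    ((0.85, 0.90, 0.20), True),
    ((0.95, 0.99, 0.05), True),
    ((0.10, 0.30, 0.90), False),  # previously identified humans
    ((0.20, 0.40, 0.80), False),
    ((0.15, 0.25, 0.95), False),
]


def bot_score(account, labeled, k=3):
    """Share of the k most similar labeled accounts that are bots (0..1)."""
    by_distance = sorted(
        (math.dist(account, features), is_bot) for features, is_bot in labeled
    )
    nearest = by_distance[:k]
    return sum(is_bot for _, is_bot in nearest) / len(nearest)


suspect = (0.88, 0.93, 0.15)
typical = (0.12, 0.30, 0.85)
print(bot_score(suspect, labeled))  # 1.0
print(bot_score(typical, labeled))  # 0.0
```

The score is a similarity judgment, not proof of automation: an account scores high simply because it resembles accounts already labeled as bots, which is why the quality of the labeled examples matters so much.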

Some manipulators play both sides of a divide through separate fake news sites and bots, driving political polarization or monetization by ads.

We recently uncovered a network of inauthentic accounts on Twitter that were all coordinated by the same entity. Some pretended to be pro-Trump supporters of the Make America Great Again campaign, whereas others posed as Trump “resisters”; all asked for political donations.

We also built a mobile app called Fakey that helps users learn how to spot misinformation. The game simulates a social media news feed, showing actual articles from low- and high-credibility sources. Users must decide what they should or should not share and what to fact-check. Analysis of data from Fakey confirms the prevalence of online social herding: users are more likely to share low-credibility articles when they believe that many other people have shared them.

Another tool, Hoaxy, shows how any extant meme spreads through Twitter. In this visualization, nodes represent actual Twitter accounts, and links depict how retweets, quotes, mentions and replies propagate the meme from account to account.
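The node-and-link idea behind this kind of visualization can be sketched as a directed graph. In the minimal Python sketch below, which is not Hoaxy's code or data model, each hypothetical event records that a meme passed from one account to another via a retweet, quote, mention or reply. A breadth-first search then finds every account the meme reached from its origin, and out-degree flags the biggest amplifier. All account names are made up.

```python
from collections import defaultdict, deque

# Hypothetical propagation events: (from_account, to_account, kind).
events = [
    ("origin", "amp1", "retweet"),
    ("origin", "amp2", "quote"),
    ("amp1", "user_a", "retweet"),
    ("amp1", "user_b", "reply"),
    ("amp1", "user_d", "quote"),
    ("amp2", "user_c", "mention"),
    ("user_x", "user_y", "retweet"),  # an unrelated cascade
]


def build_graph(events):
    """Adjacency sets: account -> accounts it passed the meme on to."""
    graph = defaultdict(set)
    for src, dst, _kind in events:
        graph[src].add(dst)
    return graph


def reach(graph, start):
    """All accounts reachable from `start`, found by breadth-first search."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


graph = build_graph(events)
cascade = reach(graph, "origin")
top_amplifier = max(graph, key=lambda acct: len(graph[acct]))

print(sorted(cascade))
print(top_amplifier)  # amp1
```

Here `amp1` passes the meme on to more accounts than the original poster does, the kind of pattern a cascade map makes visible at a glance.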

Free communication is not free. By decreasing the cost of information, we have decreased its value and invited its adulteration.

Steve Bannon Caught Running a Network of Misinformation Pages on Facebook

https://gizmodo.com/steve-bannon-caught-running-a-network-of-misinformation-1845633004

The Bannon-related pages tended to publish content at the same time and linked to the Populist Press, an even more right-wing Drudge Report copycat trafficking in disproven election fraud claims.

“If 2016 was an accident,” Quran added, “2020 has been negligence.”

+++++++++++++++

more on fake news in this IMS blog

https://blog.stcloudstate.edu/ims?s=fake+news