Searching for "college cost"

The Cost of an Adjunct

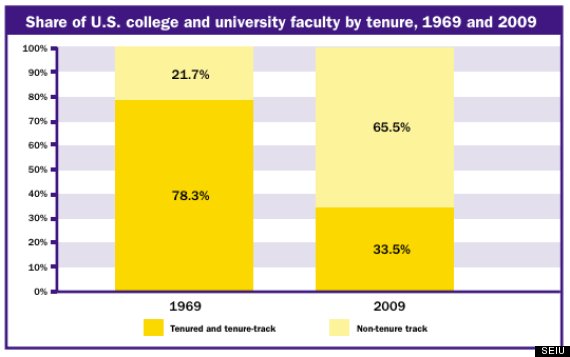

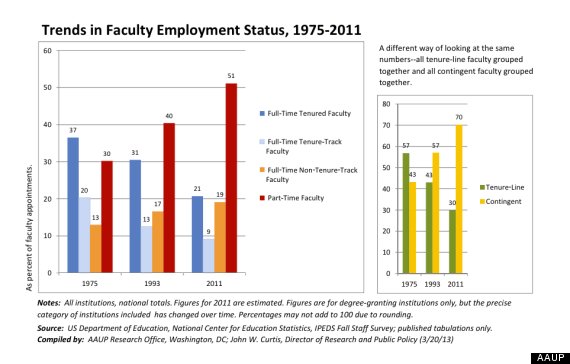

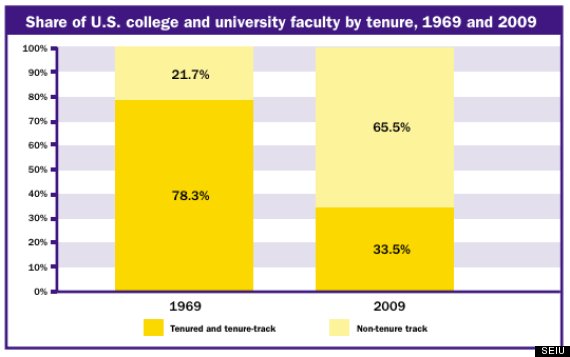

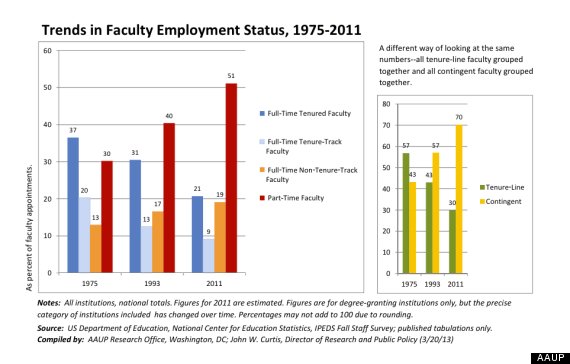

The plight of non-tenured professors is widely known, but what about the impact they have on the students they’re hired to instruct?

http://www.theatlantic.com/education/archive/2015/05/the-cost-of-an-adjunct/394091

When a college contracts ‘adjunctivitis,’ it’s the students who lose

http://www.pbs.org/newshour/making-sense/when-a-college-contracts-adjunctivitis-its-the-students-who-lose/

Here is an “apologetic” article arguing that adjuncts are not that bad for students and learning:

Are Adjunct Professors Bad for Students?

Some doubts about a recent study suggesting that part-time faculty fail to “connect” with students.

http://www.popecenter.org/commentaries/article.html?id=2045

9 Reasons Why Being An Adjunct Faculty Member Is Terrible

http://www.huffingtonpost.com/2013/11/11/adjunct-faculty_n_4255139.html

The Adjunct Revolt: How Poor Professors Are Fighting Back

http://www.theatlantic.com/business/archive/2014/04/the-adjunct-professor-crisis/361336/

“Students aren’t getting what they pay for or, if they are, it is because adjuncts themselves are subsidizing their education,” Maria Maisto, president of the adjunct activist group New Faculty Majority, told me. “Adjuncts are donating their time; they are providing it out of pocket.”

The adjunct crisis also restricts the research output of American universities. For adjuncts scrambling between multiple short-term, poorly paid teaching jobs, producing scholarship is a luxury they cannot afford. “We have lost an entire generation of scholarship because of this,”

Adjunct Professor Salary

http://www.payscale.com/research/US/Job=Adjunct_Professor/Salary

The New Old Labor Crisis

Think being an adjunct professor is hard? Try being a black adjunct professor.

http://www.slate.com/articles/life/counter_narrative/2014/01/adjunct_crisis_in_higher_ed_an_all_too_familiar_story_for_black_faculty.html

Tumbleson, B. E., & Burke, J. J. (2013). Embedding librarianship in learning management systems: A how-to-do-it manual for librarians. Neal-Schuman, an imprint of the American Library Association.

https://scsu.mplus.mnpals.net/vufind/Record/007650037

see also:

Kvenild, C., & Calkins, K. (2011). Embedded Librarians: Moving Beyond One-Shot Instruction. ACRL. Retrieved from http://www.alastore.ala.org/detail.aspx?ID=3413

p. 20 Embedding Academic and Research Libraries in the Curriculum: 2014-nmc-horizon-report-library-EN

xi. the authors are convinced that LMS embedded librarianship is becoming the primary and most productive method for connecting with college and university students, who are increasingly mobile.

xii. reference librarians engage the individual, listen, discover what is wanted and seek to point the stakeholder in profitable directions.

Instruction librarians, in contrast, step into the classroom and attempt to lead a group of students in new ways of searching for wanted information.

Sometimes that instruction librarian even designs curriculum and teaches their own credit course to guide information seekers in the ways of finding, evaluating, and using information published in various formats.

Librarians also work in systems, emerging technologies, and digital initiatives in order to provide infrastructure or improve access to collections and services for end users through the library website, discovery layers, etc. Although these arenas seemingly differ, librarians work as one.

xiii. working as an LMS embedded librarian is a proactive approach to library instruction that uses available technologies and enables a 24/7 presence.

1. Embeddedness involves more than just gaining perspective. It also allows the outsider to become part of the group through shared learning experiences and goals.

3. Embedded librarianship in the LMS is all about being as close as possible to where students are receiving their assignments and gaining instruction and advice from faculty members.

p. 6 When embedded librarians provide ready access to scholarly electronic collections, research databases, and Web 2.0 tools and tutorials, the research experience becomes less frustrating and more focused for students. Undergraduates associate this familiar online environment with the academic world.

p. 7 describes embedding a reference librarian, which LRS reference librarians do, as a “partnership with the professor.” However, there is room for “Research Consultations” (p. 8). While “One-Shot Library Instruction Sessions” and “Information Literacy Credit Courses” are addressed (p. 8-9), the content of these sessions remains old-fashioned lecture-style delivery of information.

p. 10-11. The manuscript points out clearly the weaknesses of using a library Web site. The authors fail to see that the efforts of academic librarians must go beyond the Web page and seek to ease information access by integrating the power of social media with the static information residing on the library web page.

p. 12 what becomes disturbingly clear is that faculty focus on the mechanics of the research paper over the research process. Although students are using libraries, 70% avoid librarians. Academic librarians are urged to “take an active role and initiate the dialogue with faculty to close a divide that may be growing between them and faculty and between them and students.”

Four research contexts with which undergraduates struggle: big picture, language, situational context and information gathering.

p. 15 ACRL standards One and Three: librarians might engage students who rely on their smartphones, while keeping in mind that “[s]tudents who retrieve information on their smartphones may also have trouble understanding or evaluating how the information on their phone is ‘produced, organized, and disseminated’” (Standard One).

Standard One by its definition seems obsolete. If information is formatted for desktops, it will be confusing on smartphones. By that, I do not mean adjusting the screen size, but changing the information delivery from old-fashioned lecturing to more constructivist forms, e.g., http://web.stcloudstate.edu/pmiltenoff/bi/

p. 15 As for Standard Two, which deals with effective search strategies, the LMS embedded librarian must go beyond Boolean operators and controlled vocabulary, since emerging technologies incorporate new means of searching. As I have unsuccessfully explained at LRS for about two years now: hashtag search, LinkedIn groups, QR codes, voice recognition, etc.

p. 16. Standard Five. ethical and legal use of information.

p. 23 Pearson announced OpenClass in 2011 to compete with BB, Moodle, Angel, D2L, WebCT, Sakai and others

p. 24 Common Features: content, email, discussion board, synchronous chat and conferencing tools (Wimba and Elluminate for BB)

p. 31 information and resources which librarians could share via LMS

– post links to dbases and other resources within the course. LIB web site, LibGuides or other subject-related course guidelines

– information on research concepts can be placed in a similar fashion. brief explanation of key information literacy topics (e.g., the difference between scholarly and popular periodical articles, choosing or narrowing research topics, avoiding plagiarism, citing sources properly within the required citation style, understanding the merits of different types of sources (articles, books, websites, etc.))

– Pertinent advice to the students on approaching the assignment and locating the needed information

– Tutorials on using databases or planning searches: step-by-step screencasts of navigating and searching in a database, a video tour of the library

p. 33 embedded librarian being copied on the blanket emails from instructor to students.

librarian monitors the discussion board

p. 35 examples: students place specific questions on the discussion board and are assured the librarian will reply by a certain time

instead of F2F instruction, created a D2L module, which can be placed in any course. videos, docs, links to dbases, links to citation tools etc. Quiz, which faculty can use to assess the students

p. 36 discussion forum just for the embedded librarian. for the students, but faculty are encouraged to monitor it and provide content- or assignment-specific input

video tutorials and searching tips

Contact information: email, phone, active IM chat, information on the library’s open hours

p. 37 questions to consider

what is the status of the embedded librarian: T2, grad assistant

p. 41 pilot program. small scale trial which is run to discover and correct potential problems before

One or two faculty members, with faculty from a single department

Pilot at Valdosta State U = a drop-in informational session with the hope of serving the information literacy needs of distance and online students, whereas at George Washington U, a librarian contacted a distance education faculty member to request embedding in his upcoming online Master’s course

p. 43 when librarians sense that current public services are not being fully utilized, it may signal that a new approach is needed.

pilots permit tinkering. they are all about risk-taking to enhance delivery

p. 57 marketing LMS embedded librarianship

library collections, services and facilities because faculty may be uncertain how the service benefits their classroom teaching and learning outcomes.

my note per

“it is incumbent upon librarians to promote this new mode of information literacy instruction.” This is so passé. At a time when digital humanities is under discussion and faculty across campus delve into digital humanities, which de facto absorbs digital literacy, it is shortsighted for academic librarians to still limit themselves to “information literacy,” considering that lip service is paid to librarians being the leaders in the digital humanities movement. If academic librarians want to market themselves, they have to think broadly and start with topics that ARE of interest to the campus faculty (digital humanities included) and then “push” their agenda (information literacy). One of the reasons why academic libraries are sinking into oblivion is that they are already sunk in 1990-ish practices (information literacy) and miss the “hip” trends, which are of interest to faculty and students. The authors (also paying lip service to 21st-century necessities) remain imprisoned in archaic content. At a time when multi (meta) literacies are discussed as the goal of library instruction, they push for more arduous marketing of limited content. Indeed, marketing is needed, but the best marketing is delivering modern and user-sought content.

the stigma of “academic librarians keep doing what they know well, just do it better.” Lip service to change and lifelong learning. But the truth is that the commitment to “information literacy” versus the necessity to provide multi (meta) literacies instruction (Reframing Information Literacy as a metaliteracy) is minimizing the entire idea of academic librarians reinventing themselves in the 21st century.

Here is more: NRNT-New Roles for New Times

p. 58 According to the Burke and Tumbleson national LMS embedded librarianship survey, 280 participants yielded the following data regarding embedded librarianship:

- traditional F2F LMS courses – 69%

- online courses – 70%

- hybrid courses – 54%

- undergraduate LMS courses – 61%

- graduate LMS courses – 42%

of those respondents in 2011, 18% had had the initiative running for four or more years, which places the start of those programs in 2007 or earlier. Thus, SCSU is almost a decade behind.

p. 58 promotional methods:

- word of mouth

- personal invitation by librarians

- email by librarians

- library brochures

- library blogs

four years later, the LRS reference librarians’ report https://magic.piktochart.com/output/5704744-libsmart-stats-1415 makes no mention of online courses, let alone embedded librarianship

my note:

A library blog was offered numerous times to the LRS librarians and, subsequently, to the LRS dean, but it was brushed away, as were the proposals for a modern institutional social media approach (social media at LRS does not favor proficiency in social media but rather sees social media as a learning ground for novices, as per the 11:45 AM visit to the LRS social media meeting of May 6, 2015). The advantages of a blog over a static HTML page were explained at length, but it was evident that the advantages are not understood, nor is the difference between Web 2.0 tools (such as social media) and Web 1.0 tools (such as a static web page). The consensus among LRS staff and faculty is to keep projecting Web 1.0 ideas onto Web 2.0 tools (e.g., using Facebook as a replacement for Adobe Dreamweaver: instead of learning how to create static HTML pages to broadcast static information, use Facebook for quick and dirty announcements of static information). It is flabbergasting to have the offer of a blog to replace Web 1.0 rejected at a time when the corporate world promotes live-streaming (http://www.socialmediaexaminer.com/live-streaming-video-for-business/) as a way to promote services (academic librarians can deliver their content live).

p. 59 Marketing 2.0 in the information age is consumer-oriented. Marketing 3.0 belongs to the values-driven era and touches the human spirit (Kotler, Kartajaya, and Setiawan 2010, 6).

The four Ps: products and services, place, price and promotion. Libraries should consider two more P’s: positioning and politics.

Mathews (2009): library advertising should focus on the lifestyle of students. Academic library advertising to students today needs to be “tangible, experiential, relatable, measurable, sharable and surprising.” Leboff (2011, p. 400) agrees with Mathews: the battle in the marketplace is no longer for transaction, it is for attention. Formerly: billboards, magazines, newspapers, radio, TV, direct calls. Today: emphasize conversation, authenticity, values, establishing credibility and demonstrating expertise and knowledge by supplying good content, to enhance reputation (Leboff, 2011, 134). Translated for the embedded librarians: Google goes only so far; students want answers to their personal research dilemmas and questions. Being a credentialed information specialist with years of experience is no longer enough to win over an admiring following. The embedded librarian must be seen as open and honest in his interaction with students.

p. 60 becoming attractive to end-users is the essential message in advertising LMS embedded librarianship. That attractiveness relies upon two elements: being noticed and imparting values (Leboff, 2011, 99)

p. 61 connecting with faculty

p. 62 reaching students

- attending a synchronous chat session

- watching a digital tutorial

- posting a question in a discussion board

- using an instant messaging widget

be careful not to overload students with too much information. don’t make contact too frequently and be perceived as an annoyance and intruder.

p. 65. contemporary publicity and advertising is incorporating storytelling. testimonials differ from stories

p. 66 no-cost marketing. social media

low-cost marketing – print materials, fliers, bookmarks, posters, floor plans, newsletters, giveaways (pens, magnets, USB drives), events (orientations, workshops, contests, film viewings), campus media, digital media (lib web page, blogs, podcasts, social networking sites)

p. 69 Instructional Content and Instructional Design

p. 70 ADDIE Model

Analysis: the requirements for the given course, assignments.

Ask about the instructor’s expectations of students vis-a-vis research or information literacy activities

students’ existing knowledge about the library related to their assignments

which are the essential resources for this course

is this a hybrid or online course and what are the options for the librarian to interact with the students.

due date for the research assignment. what is the timeline for completing the assignment

when research tips or any other librarian help can be inserted

copy of the syllabus or any other assignment document

p. 72 discuss the course with faculty member. Analyze the instructional needs of a course. Analyze students’ needs. Create list of goals. E.g.: how to find, navigate and use the PsycINFO dbase; how to create citations in APA format; be able to identify scholarly sources and differentiate them from popular sources; know other subject-related dbases to search; be able to create a bibliography and use in-text citations in APA format

p. 74 Design (Addie)

the embedded component is a course within a course. Add pre-developed IL components to the broader content of the course. multiple means of contact information for the librarians and/or other library staff. link to dbases. link to citation guidance and/or tutorial on APA citations. information on how to distinguish scholarly and popular sources. links to other dbases. information and guidance on bibliographic and in-text citations in APA, either through a link, content written within the course, a tutorial, or a combination. forum or a discussion board topic to take questions. f2f lib instruction session with students

p. 76 decide which resources to focus on and which skills to teach and reinforce. focus on key resources

p. 77 development (Addie).

-building content;the “landing” page at LRS is the subject guides page. resources integrated into the assignment pages. video tutorials and screencasts

-finding existing content; google search of e.g.: “library handout narrowing topic” or “library quiz evaluating sources,” “avoiding plagiarism,” scholarly vs popular periodicals etc

-writing narrative content. p. 85

p. 87 Evaluation (Addie)

formative: to change what the embedded librarian offers to improve h/er services to students for the remainder of the course

summative at the end of the course:

p. 89 Online, F2F and Hybrid Courses

p. 97 assessing the impact of the embedded librarian.

what is the purpose of the assessment; who is the audience; what will it focus on; what resources are available

p. 98 surveys of faculty; of students; analysis of student research assignments; focus groups of students and faculty

p. 100 assessment methods: p. 103/4 survey template

https://www.ets.org/iskills/about

https://www.projectsails.org/ (paid)

http://www.trails-9.org/

http://www.library.ualberta.ca/augustana/infolit/wassail/

p. 106 gathering LMS stats. Usability testing

examples: p. 108-9, UofFL: pre-survey and post-survey of students’ perceptions of library skills, discussion forum analysis and interview with the instructor

p. 122 create an LMS module for reuse (standardized template)

p. 123 subject and course LibGuides, digital tutorials, PPTs,

research mind maps, charts, logs, or rubrics

http://creately.com/blog/wp-content/uploads/2012/12/Research-Proposal-mind-map-example.png

http://www.library.arizona.edu/help/tutorials/mindMap/sample.php (excellent)

or paper-based if needed: Concept Map Worksheet

Productivity Tools for Graduate Students: MindMapping http://libguides.gatech.edu/c.php

rubrics:

http://www.cornellcollege.edu/LIBRARY/faculty/focusing-on-assignments/tools-for-assessment/research-paper-rubric.shtml

http://gvsu.edu/library/instruction/research-guidance-rubric-for-assignment-design-4.htm

Creating Effective Information Literacy Assignments http://www.lib.jmu.edu/instruction/assignments.aspx

course handouts

guides on research concepts http://library.olivet.edu/subject-guides/english/college-writing-ii/research-concepts/

http://louisville.libguides.com/c.php

Popular versus scholarly http://www.library.arizona.edu/help/tutorials/scholarly/guide.html

list of frequently asked q/s:

blog posts

banks of reference q/s

p. 124. Resistance or Receptivity

p. 133 getting admin access to LMS for the librarians.

p. 136 mobile students, dominance of born-digital resources

———————-

Summey T, Valenti S. But we don’t have an instructional designer: Designing online library instruction using isd techniques. Journal Of Library & Information Services In Distance Learning [serial online]. January 1, 2013;Available from: Scopus®, Ipswich, MA. Accessed May 11, 2015.

http://login.libproxy.stcloudstate.edu/login?qurl=http%3a%2f%2fsearch.ebscohost.com%2flogin.aspx%3fdirect%3dtrue%26db%3dedselc%26AN%3dedselc.2-52.0-84869866367%26site%3deds-live%26scope%3dsite

instructional designer library instruction using ISD techniques

Shank, J. (2006). The blended librarian: A job announcement analysis of the newly emerging position of instructional design librarian. College And Research Libraries, 67(6), 515-524.

http://login.libproxy.stcloudstate.edu/login?qurl=http%3a%2f%2fsearch.ebscohost.com%2flogin.aspx%3fdirect%3dtrue%26db%3dedselc%26AN%3dedselc.2-52.0-33845291135%26site%3deds-live%26scope%3dsite

The Blended Librarian_ A Job Announcement Analysis of the Newly Emerging Position of Instructional Design Librarian

Macklin, A. (2003). Theory into practice: Applying David Jonassen’s work in instructional design to instruction programs in academic libraries. College And Research Libraries, 64(6), 494-500.

http://login.libproxy.stcloudstate.edu/login?qurl=http%3a%2f%2fsearch.ebscohost.com%2flogin.aspx%3fdirect%3dtrue%26db%3dedselc%26AN%3dedselc.2-52.0-7044266019%26site%3deds-live%26scope%3dsite

Theory into Practice_ Applying David Jonassen_s Work in Instructional Design to Instruction Programs in Academic Libraries

Walster, D. (1995). Using Instructional Design Theories in Library and Information Science Education. Journal of Education for Library and Information Science, (3). 239.

http://login.libproxy.stcloudstate.edu/login?qurl=http%3a%2f%2fsearch.ebscohost.com%2flogin.aspx%3fdirect%3dtrue%26db%3dedsjsr%26AN%3dedsjsr.10.2307.40323743%26site%3deds-live%26scope%3dsite

Using Instructional Design Theories in Library and Information Science Education

Mackey, T. P., & Jacobson, T. E. (2011). Reframing information literacy as a metaliteracy. College And Research Libraries, 72(1), 62-78.

http://login.libproxy.stcloudstate.edu/login?qurl=http%3a%2f%2fsearch.ebscohost.com%2flogin.aspx%3fdirect%3dtrue%26db%3dedselc%26AN%3dedselc.2-52.0-79955018169%26site%3deds-live%26scope%3dsite

Reframing Information Literacy as a metaliteracy

Nichols, J. (2009). The 3 directions: Situated information literacy. College And Research Libraries, 70(6), 515-530.

http://login.libproxy.stcloudstate.edu/login?qurl=http%3a%2f%2fsearch.ebscohost.com%2flogin.aspx%3fdirect%3dtrue%26db%3dedselc%26AN%3dedselc.2-52.0-73949087581%26site%3deds-live%26scope%3dsite

The 3 Directions_ Situated literacy

—————

Journal of Library & Information Services in Distance Learning (J Libr Inform Serv Dist Learn)

https://www.researchgate.net/journal/1533-290X_Journal_of_Library_Information_Services_in_Distance_Learning

http://conference.acrl.org/

http://www.loex.org/conferences.php

http://www.ala.org/lita/about/igs/distance/lit-igdl

————

https://magic.piktochart.com/output/5704744-libsmart-stats-1415

16 Startups Poised to Disrupt the Education Market

Colleges and universities are facing new competition for customers–students and their parents–from startups delivering similar goods (knowledge, credentials, prestige) more affordably and efficiently. Here’s a rundown of some of those startups.

Related story on the IMS blog:

https://blog.stcloudstate.edu/ims/2014/01/12/cms-course-management-systemsoftware-alternatives/

In a new book, The End of College: Creating the Future of Learning and the University of Everywhere, author Kevin Carey distills a brave new world in which a myriad of lower-cost solutions–most in their infancy–threaten to upend the four-year, high-tuition business model by which colleges and universities have traditionally thrived.

1. Rafter

Using cloud-based e-textbooks and course materials, Rafter helps campus bookstores digitize their offerings and keep their prices low, allowing them to regain the market share they were losing to other stores and course-materials marketplaces.

2. Piazza

Piazza is an online study room where students can anonymously ask questions to teachers and other students. The best answers get pushed to the top through repeated user endorsement.

3. InsideTrack

As do the above two companies, InsideTrack sells its services to universities. It provides highly personalized coaching to students and it helps colleges assess whether their technology and processes are equipped to measure student progress. InsideTrack recently announced a partnership with Chegg, through which it will provide its coaching services directly to students.

4. USEED

If you attended a four-year school, then you know the feeling of receiving relentless requests for alumni donations. USEED is like Kickstarter for school fundraising:

5. Course Hero

One of Inc.‘s 30-Under-30 companies from 2013, Course Hero is an online source of study guides, class notes, past exams, flash cards, and tutoring services.

6. Quizlet

This is another site offering shared learning tools from students worldwide. Quizlet

according to Tony Wan’s superb story on EdSurge.

7. The Minerva Project

Other companies on this list provide services to schools or students. The Minerva Project is, literally, a new school.

8. Dev Bootcamp

In its own way, Dev Bootcamp is also a new school. Its program allows you to become a Web developer after a 19-week course costing under $14,000.

9. The UnCollege Movement

Founded by Thiel Fellow Dale Stephens (who took $100,000 from Peter Thiel to not go to college), The UnCollege Movement provides students with a 12-month Gap Year experience for $16,000.

10. Udacity

Founded by Stanford computer science professor Sebastian Thrun, Udacity creates online classes through which companies can train employees. AT&T, for example, paid Udacity $3 million to develop a series of courses, according to The Wall Street Journal.

11. Coursera

The tagline says it all: “Free online courses from top universities.” Indeed, Coursera’s partners include prestigious universities worldwide.

12. EdX

In a nonprofit joint venture, MIT and Harvard created their own organization offering free online courses from top universities. Several other schools now offer their courses through EdX, including Berkeley, Georgetown, and the University of Texas system.

13. Carnegie Mellon University’s Open Learning Initiative

CMU’s OLI is another example of a nonprofit startup founded by a school to ward off its own potential disruption.

14. Saylor.org

Founder Michael Saylor has been musing on how technology can scale education since he himself was an undergrad at MIT in the early ’80s. Anyone, anywhere, can take courses on Saylor.org for free.

15. Open Badges

Founded by Mozilla, Open Badges is an attempt to establish “a new online standard to recognize and verify learning.”

16. Accredible

Calling itself the “future of certificate management,” Accredible is the company that provides certification services for several of the online schools on this list, including Saylor.org and Udacity.

Library Makerspaces: From Dream to Reality

Instructor: Melissa Robinson

Dates: April 6 to May 1st, 2015

Credits: 1.5 CEUs

Price: $175

http://libraryjuiceacademy.com/114-makerspaces.php

Designing a makerspace for your library is an ambitious project that requires significant staff time and energy. The economic, educational and inspirational rewards for your community and your library, however, will make it all worthwhile. This class will make the task of starting a makerspace less daunting by taking librarians step by step through the planning process. Using readings, online resources, discussions and hands-on exercises, participants will create a plan to bring a makerspace or maker activities to their libraries. Topics covered will include tools, programs, space, funding, partnerships and community outreach. This is a unique opportunity to learn in depth about one public library’s experience creating a fully-functioning makerspace, while also exploring other models for engaging libraries in the maker movement.

Melissa S. Robinson is the Senior Branch Librarian at the Peabody Institute Library’s West Branch in Peabody, Massachusetts. Melissa has over twelve years of experience in public libraries. She has a BA in political science from Merrimack College, a graduate certificate in Women in Politics and Public Policy from the University of Massachusetts Boston and a MLIS from Southern Connecticut State University. She is the co-author of Transforming Libraries, Building Communities (Scarecrow Press, 2013).

Read an interview with Melissa about this class:

http://libraryjuiceacademy.com/news/?p=733

Course Structure

This is an online class that is taught asynchronously, meaning that participants do the work on their own time as their schedules allow. The class does not meet together at any particular times, although the instructor may set up optional synchronous chat sessions. Instruction includes readings and assignments in one-week segments. Class participation is in an online forum environment.

Payment Info

You can register in this course through the first week of instruction. The “Register” button on the website goes to our credit card payment gateway, which may be used with personal or institutional credit cards. (Be sure to use the appropriate billing address). If your institution wants to pay using a purchase order, please contact us to make arrangements.

==============================

Making, Collaboration, and Community: fostering lifelong learning and innovation in a library makerspace

Tuesday, April 7, 2015 10AM-11:30AM PDT

Registration link: http://www.cla-net.org/?855

Travis Good will share insights garnered from having visited different makerspaces and Maker Faires across the country. He will explain why “making” is fundamentally important, what it’s affecting and why libraries are a natural place to house makerspaces. Uyen Tran will discuss how without funding, she was able to turn a study room with two 3D printers into a simple makerspace that is funded and supported by the community. She will also provide strategies for working with community partners to provide free and innovative maker programs and creating a low cost/no cost library maker environment. Resources and programming ideas will also be provided for libraries with varying budgets and staffing. Upon completing this webinar, every attendee should be able to start implementing “maker” programs at their library.

Do student evaluations measure teaching effectiveness?

Mauricio Vasquez, Ph.D., Assistant Professor in MIS

Higher Education institutions use course evaluations for a variety of purposes. They factor in retention analysis for adjuncts, tenure approval or rejection for full-time professors, even in salary bonuses and raises. But, are the results of course evaluations an objective measure of high quality scholarship in the classroom?

—————————-

From: Miltenoff, Plamen

Sent: Wednesday, November 20, 2013 4:09 PM

To: ‘technology@lists.mnscu.edu’; ‘edgamesandsims@lists.mnscu.edu’

Cc: Oyedele, Adesegun

Subject: virtual worlds and simulations

Good afternoon

Apologies for any cross posting…

Following a request from fellow faculty at SCSU, I am interested in learning more about any possibilities for using virtual worlds and simulations [in the MnSCU system] for teaching and learning purposes.

The last I remember was a rather messy divorce between academia and Second Life (the latter accusing an educational institution of harboring SL hackers). Around that time, MnSCU dropped their SL support.

Does anybody have an idea where faculty can get low-cost if not free access to virtual worlds? Any alternatives for other simulation exercises?

Any info/feedback will be deeply appreciated.

Plamen

After Frustrations in Second Life, Colleges Look to New Virtual Worlds. February 14, 2010

—–Original Message—–

From: Weber, James E.

Sent: Wednesday, November 20, 2013 5:41 PM

To: Miltenoff, Plamen

Subject: RE: virtual worlds and simulations

Hi Plamen:

I don’t use virtual worlds, but I do use a couple of simulations…

I use http://www.glo-bus.com/ extensively in my strategy class. It is a primary integrating mechanism for this capstone class.

I also use http://erpsim.hec.ca/en because it uses and illustrates SAP and process management.

http://www.goventure.net/ is one I have been looking into. Seems more flexible…

Best,

Jim

From: brock.dubbels@gmail.com [mailto:brock.dubbels@gmail.com] On Behalf Of Brock Dubbels

Sent: Wednesday, November 20, 2013 4:29 PM

To: Oyedele, Adesegun

Cc: Miltenoff, Plamen; Gaming and Simulations

Subject: Re: virtual worlds and simulations

That is fairly general

what constitutes programming skill is not just coding, but learning icon-driven actions and logic in a menu

for example, Sketch Up is free. You still have to learn how to use the interface.

there is drag and drop game software, but this is not necessarily a share simulation

From: Kalyvaki, Maria [mailto:Maria.Kalyvaki2@smsu.edu]

Sent: Wednesday, November 20, 2013 4:26 PM

To: Miltenoff, Plamen

Subject: RE: virtual worlds and simulations

Hi,

I received this email today and I am happy that someone is interested in Second Life. The Second Life platform and some other virtual worlds are free to use. Depending on your expectations, the cost of using the virtual world may increase. I am using some of those virtual worlds, and my previous school, Texas Tech University, was using SL for a course.

Let me know how I could help you with the virtual worlds.

With appreciation,

Maria

From: Jane McKinley [mailto:Jane.McKinley@riverland.edu]

Sent: Thursday, November 21, 2013 11:09 AM

To: Miltenoff, Plamen

Cc: Jone Tiffany; Pamm Tranby; Dan Harber

Subject: Virtual worlds

Hi Plamen,

To introduce myself I am the coordinator/ specialist for our real life allied health simulation center at Riverland Community College. Dan Harber passed your message on to me. I have been actively working in SL since 2008. My goal in SL was to do simulation for nursing education. I remember when MnSCU had the island. I tried contacting the lead person at St. Paul College about building a hospital on the island for nursing that would be open to all MN programs, but never could get a response back.

Yes, SL did take the education fees away for a while but they are now back. Second Life is free in and of itself; it is finding islands with educational simulations that takes time to explore, but many are free and open to the public. I do have a list of islands that may be of interest to you. They are all health related, but there are science islands such as Genome Island. As a matter of fact, there is a talk that will be out there tonight about how to do research and conduct fair experiments at 7:00 our time.

I have been lucky to find someone with the same goals as I have. Her name is Jone Tiffany. She is a professor at Bethel University in the nursing program. In the last 4 years we have built an island for nursing education. This consists of a hospital, clinic, office building, classrooms and a library. We also built a simulation center. (Although I accidentally removed the floor and some walls in it. Our builder is getting it back together.) There is such a shortage of real mental health and public health sites that a second island is being purchased to meet this request. On that island we are going to build an inner city, urban and rural communities. This will be geared towards meeting those requests. Our law enforcement program at Riverland has voiced an interest in SL with being able to set up virtual crime scenes which could be staged anywhere on the two islands. With the catastrophic natural events and terrorist activities that have occurred recently we will replicate these same communities on the other side of the island, only it will be the aftermath of a hurricane and tornado, or flooding. On the other side we could stage the aftermath of a bombing such as what happened in Boston. Victims could be transported to the hospital ED. Law enforcement could do an investigation.

We have also been working with the University of Wisconsin, Oshkosh. They have a plane crash simulation and what we call a grunge house that students go into to see what the living conditions are like for those who live in poverty and what could be done about it.

Since I am not faculty I cannot take our students out to SL, but Jone has had well over 100 of her students in there doing various assignments. She is taking more out this semester. They have done such things as family health assessments and diabetes assessment and have to create a plan of care. She has done lectures out there. So the students come out with their avatars and sit in a classroom. This is a way distance learning can be done while still engaging with the students. The beauty of SL is that you can be creative. Since the island is called Nightingale Isle, some of the builds are designed with that theme in mind. The classrooms, for example, are tiered up a mountain and look like the remains of a bombed out church from the Crimean War; it is one of our favorite spots. We also have an area open on the island for support groups to meet. About 5 years ago Riverland did do a congestive heart failure simulation with another hospital in SL. That faculty person unfortunately has left so we have not been able to continue it, but the students loved it. We did the same scenario with Jone’s students in the sim center we have and again the students loved it.

The island is private but anyone is welcome to use it. We do this so that we know and can control who is on the island. All that is needed is to let Jone or me know who you are, where you are from (institution), and what your avatar name is. We will friend you in SL and invite you to join the group, then you have access to the island. Both Jone and I are always eager to share what all goes on out there (as you can tell by this e-mail). There is so much potential of what can be done. We have been lucky to be able to hire the builder who builds for the Mayo Clinic. Their islands are next to ours. She replicated the Gonda Building including the million dollar plus chandeliers.

I can send you the list of the health care related islands, there are about 40 of them. I also copied Jone, she can give you more information on what goes into owning an island. We have had our ups and downs with this endeavor but believe in it so much that we have persevered and have a beautiful island to show for it.

Let me know if you want to talk more.

Jane (aka Tessa Finesmith-avatar name)

Jane McKinley, RN

College Lab Specialist -Riverland Center for Simulation Learning

Riverland Community College

Austin, MN 55912

jane.mckinley@riverland.edu

507-433-0551 (office)

From: Jeremy Nienow [mailto:JNienow@inverhills.mnscu.edu]

Sent: Thursday, November 21, 2013 10:11 AM

To: Miltenoff, Plamen

Cc: Sue Dion

Subject: Teaching in virtual worlds

Hello,

A friend here at IHCC sent me your request for information on teaching in low-cost virtual environments.

I like to think of myself on the cusp of gamification and I have a strong background in gaming in general (being a white male in my 30s).

Anyway – almost every MMORPG (massively multiplayer online role-playing game) today is set up on a Free to Play platform for its inhabitants.

There are maybe a dozen of these out there right now, from Dungeons & Dragons Online, to Tera, to Neverwinter Nights…etc.

It’s free to download, with no subscription fee (like there used to be), and it’s free to play – how they get the money is they make game items and cool aspects of the game cost money…people pay for the privilege of leveling faster.

So – you could easily have all your students download the game (provided they all have a suitable system and internet access), make an avatar, start in the same place – and teach right from there.

I have thought of doing this for an all online class before, but wanted to wait till I was tenured.

Best,

Jeremy L. Nienow, PhD., RPA

Anthropology Faculty

Inver Hills Community College

P.S. Landon Pirius (sp?) who was once at IHCC and now I believe is at North Hennepin maybe… wrote his PhD on teaching in online environments and used World of Warcraft.

From: Gary Abernethy [mailto:Gary.Abernethy@minneapolis.edu]

Sent: Thursday, November 21, 2013 8:46 AM

To: Miltenoff, Plamen

Subject: Re: [technology] virtual worlds and simulations

Plamen,

Below are the current options I am aware of for VW and SIM. You may also want to take a look at Kuda, on Google Code; I worked at SRI when we developed this tool. I am interested in collaboration in this area.

Hope the info helps

https://www.activeworlds.com/index.html

http://www.opencobalt.org/

http://opensimulator.org/wiki/Main_Page

http://metaverse.sourceforge.net/

http://stable.kuda.googlecode.com

Gary Abernethy

Director of eLearning

Academic Affairs

Minneapolis Community and Technical College | 1501 Hennepin Avenue S. | Minneapolis, MN 55403

Phone 612-200-5579

Gary.Abernethy@minneapolis.edu | http://www.minneapolis.edu

From: John OBrien [mailto:John.OBrien@so.mnscu.edu]

Sent: Wednesday, November 20, 2013 11:37 PM

To: Miltenoff, Plamen

Subject: RE: virtual worlds and simulations

I doubt this is so helpful, but maybe: http://wiki.secondlife.com/wiki/SLED

http://diyubook.com/2013/07/the-mooc-is-dead-long-live-open-learning/

We’re at a curious point in the hype cycle of educational innovation, where the hottest concept of the past year–Massive Open Online Courses, or MOOCs–is simultaneously being discovered by the mainstream media, even as the education-focused press is declaring them dead. “More Proof MOOCs are Hot,” and “MOOCs Embraced By Top Universities,” said the Wall Street Journal and USA Today last week upon the announcement that Coursera had received a $43 million round of funding to expand its offerings;

“Beyond MOOC Hype” was the nearly simultaneous headline in Inside Higher Ed.

Can MOOCs really be growing and dying at the same time?

The best way to resolve these contradictory signals is probably to accept that the MOOC, itself still an evolving innovation, is little more than a rhetorical catchall for a set of anxieties around teaching, learning, funding and connecting higher education to the digital world. This is a moment of cultural transition. Access to higher education is strained. The prices just keep rising. Questions about relevance are growing. The idea of millions of students from around the world learning from the world’s most famous professors at very small marginal cost, using the latest in artificial intelligence and high-bandwidth communications, is a captivating one that has drawn tens of millions in venture capital. Yet, partnerships between MOOC platforms and public institutions like SUNY and the University of California to create self-paced blended courses and multiple paths to degrees look like a sensible next step for the MOOC, but they are far from that revolutionary future. Separate ideas like blended learning and plain old online delivery seem to be blurring with and overtaking the MOOC–even Blackboard is using the term.

The time seems to be ripe for a reconsideration of the “Massive” impact of “Online” and “Open” learning. The Reclaim Open Learning initiative is a growing community of teachers, researchers and learners in higher education dedicated to this reconsideration. Supporters include the MIT Media Lab and the MacArthur Foundation-supported Digital Media and Learning Research Hub. I am honored to be associated with the project as a documentarian and beater of the drum.

Entries are currently open for our Innovation Contest, offering a $2000 incentive to either teachers or students who have projects to transform higher education in a direction that is connected and creative, is open as in open content and open as in open access, that is participatory, that takes advantage of some of the forms and practices that the MOOC also does but is not beholden to the narrow mainstream MOOC format (referring instead to some of the earlier iterations of student-created, distributed MOOCs created by Dave Cormier, George Siemens, Stephen Downes and others.)

Current entries include a platform to facilitate peer to peer language learning, a Skype-based open-access seminar with guests from around the world, and a student-created course in educational technology. Go here to add your entry! Deadline is August 2. Our judges include Cathy Davidson (HASTAC), Joi Ito (MIT), and Paul Kim (Stanford).

Reclaim Open Learning earlier sponsored a hackathon at the MIT Media Lab. This fall, September 27 and 28, our judges and contest winners will join us at a series of conversations and demo days to Reclaim Open Learning at the University of California, Irvine. If you’re interested in continuing the conversation, join us there or check us out online.

http://blogs.kqed.org/mindshift/2010/10/teachers-customize-textbooks-online/

http://www.curriki.org/welcome/about-curriki/

Connexions: A place for teachers, students, and professionals to search and contribute scholarly content, organized into “modules” or topic areas instead of entire textbooks.

CK12 FlexBooks: A nonprofit that aims to reduce the cost of textbook materials by encouraging the development of what they call the “FlexBook.” Anyone can view or help create these standards-based, customizable, collaborative texts.

Shmoop: An up-and-coming collection of freely shared, expert-written content (most Shmoop authors are Ph.D.s and high school or college-level educators) with the goal of inspiring students and providing tons of free resources to teachers that include writing guides, analyses, and discussions.

MIT Open CourseWare: The Massachusetts Institute of Technology publishes nearly all of its course content on this site, from videos to lecture notes to exams, all free of charge and open to the public. Many other universities are doing the same, often using the content management system EduCommons.

Thursday, April 11, 11AM-1PM, Miller Center B-37

and/or

http://media4.stcloudstate.edu/scsu

We invite the campus community to a presentation by four vendors of Classroom Response System (CRS), AKA “clickers”:

11:00-11:30AM Poll Everywhere, Mr. Alec Nuñez

11:30-12:00PM iClicker, Mr. Jeff Howard

12:00-12:30PM Top Hat Monocle, Mr. Steve Popovich

12:30-1PM Turning Technologies, Mr. Jordan Ferns

links to documentation from the vendors:

http://web.stcloudstate.edu/informedia/crs/ClickerSummaryReport_NDSU.docx

http://web.stcloudstate.edu/informedia/crs/Poll%20Everywhere.docx

http://web.stcloudstate.edu/informedia/crs/tophat1.pdf

http://web.stcloudstate.edu/informedia/crs/tophat2.pdf

http://web.stcloudstate.edu/informedia/crs/turning.pdf

Top Hat Monocle docs:

http://web.stcloudstate.edu/informedia/crs/thm/FERPA.pdf

http://web.stcloudstate.edu/informedia/crs/thm/proposal.pdf

http://web.stcloudstate.edu/informedia/crs/thm/THM_CaseStudy_Eng.pdf

http://web.stcloudstate.edu/informedia/crs/thm/thm_vsCRS.pdf

iClicker docs:

http://web.stcloudstate.edu/informedia/crs/iclicker/iclicker.pdf

http://web.stcloudstate.edu/informedia/crs/iclicker/iclicker2VPAT.pdf

http://web.stcloudstate.edu/informedia/crs/iclicker/responses.doc

Questions to vendor: alec@polleverywhere.com

- 1. Is your system proprietary as far as the handheld device and the operating system software?

The site and the service are the property of Poll Everywhere. We do not provide handheld devices. Participants use their own device, be it a smart phone, cell phone, laptop, tablet, etc.

- 2. Describe the scalability of your system, from small classes (20-30) to large auditorium classes (500+).

Poll Everywhere is used daily by thousands of users. Audience sizes upwards of 500+ are not uncommon. We’ve been used for events with 30,000 simultaneous participants in the past.

- 3. Is your system receiver/transmitter based, wi-fi based, or other?

N/A

- 4. What is the usual process for students to register a “CRS” (or other device) for a course? List all of the possible ways a student could register their device. Could a campus offer this service rather than through your system? If so, how?

Student participants may register by filling out a form. Or, student information can be uploaded via a CSV.

- 5. Once a “CRS” is purchased can it be used for as long as the student is enrolled in classes? Could “CRS” purchases be made available through the campus bookstore? Once a student purchases a “clicker” are they able to transfer ownership when finished with it?

N/A. Poll Everywhere sells service licenses; the length and number of students supported would be outlined in a services agreement.

- 6. Will your operating software integrate with other standard database formats? If so, list which ones.

Need more information to answer.

- 7. Describe the support levels you provide. If you offer maintenance agreements, describe what is covered.

8am to 8pm EST native English speaking phone support and email support.

- 8. What is your company’s history in providing this type of technology? Provide a list of higher education clients.

Company pioneered and invented the use of this technology for audience and classroom response. http://en.wikipedia.org/wiki/Poll_Everywhere.

- University of Notre Dame, South Bend, Indiana

- University of North Carolina-Chapel Hill, Raleigh, North Carolina

- University of Southern California, Los Angeles, California

- San Diego State University, San Diego, California

- Auburn University, Auburn, Alabama

- King’s College London, London, United Kingdom

- Raffles Institution, Singapore

- Fayetteville State University, Fayetteville, North Carolina

- Rutgers University, New Brunswick, New Jersey

- Pepperdine University, Malibu, California

- Texas A&M University, College Station, Texas

- University of Illinois, Champaign, Illinois

- 9. What measures does your company take to ensure student data privacy? Is your system in compliance with FERPA and the Minnesota Data Practices Act? (https://www.revisor.leg.state.mn.us/statutes/?id=13&view=chapter)

Our Privacy Policy can be found here: http://www.polleverywhere.com/privacy-policy. We take privacy very seriously.

- 10. What personal data does your company collect on students and for what purpose? Is it shared or sold to others? How is it protected?

Name. Phone Number. Email. For the purposes of voting and identification (Graded quizzes, attendance, polls, etc.). It is never shared or sold to others.

- 11. Do any of your business partners collect personal information about students that use your technology?

No.

- 12. With what formats can test/quiz questions be imported/exported?

Import via text. Export via CSV.

- 13. List compatible operating systems (e.g., Windows, Macintosh, Palm, Android)?

Works via standard web technology including Safari, Chrome, Firefox, and Internet Explorer. Participant web voting fully supported on Android and iOS devices. Text message participation supported via both shortcode and longcode formats.

- 14. What are the total costs to students including device costs and periodic or one-time operation costs?

Depends on negotiated service level agreement. We offer a student pays model at $14 per year or Institutional Licensing.

- 15. Describe your costs to the institution.

Depends on negotiated service level agreement. We offer a student pays model at $14 per year or Institutional Licensing.

- 16. Describe how your software integrates with PowerPoint or other presentation systems.

Downloadable slides from the website for Windows PowerPoint and downloadable app for PowerPoint and Keynote integration on a Mac.

- 17. State your level of integration with Desire2Learn (D2L). Does the integration require a server or other additional equipment the campus must purchase?

Export results from site via CSV for import into D2L.

- 18. How does your company address disability accommodation for your product?

We follow the latest web standards best practices to make our website widely accessible by all. To make sure we live up to this, we test our website in a text-based browser called Lynx that makes sure we’re structuring our content correctly for screen readers and other assistive technologies.

- 19. Does your software limit the number of answers per question in tests or quizzes? If so, what is the max question limit?

No.

- 20. Does your software provide for integrating multimedia files? If so, list the file format types supported.

Supports image formats (.PNG, .GIF, .JPG).

- 21. What has been your historic schedule for software releases and what pricing mechanism do you make available to your clients for upgrading?

We ship new code daily. New features are released several times a year depending on when we finish them. New features are released to the website for use by all subscribers.

- 22. Describe your “CRS”(s).

Poll Everywhere is a web based classroom response system that allows students to participate from their existing devices. No expensive hardware “clickers” are required. More information can be found at http://www.polleverywhere.com/classroom-response-system.

- 23. If applicable, what is the average life span of a battery in your device and what battery type does it take?

N/A. Battery manufacturers hate us. Thirty percent of their annual profits can be attributed to their use in clickers (we made that up).

- 24. Does your system automatically save upon shutdown?

Ours is a “cloud based” system. User data is stored there even when your computer is not on.

- 25. What is your company’s projection/vision for this technology in the near and far term?

We want to take clicker companies out of business. We think it’s ridiculous to charge students and institutions a premium for outdated technology when existing devices and standard web technology can be used instead for less than a tenth of the price.

- 26. Does any of your software/apps require administrator permission to install?

No.

- 27. If your system is radio frequency based, what frequency spectrum does it operate in? If the system operates in the 2.4-2.5 GHz spectrum, have you tested to ensure that smart phones, wireless tablets and laptops, and 2.4 GHz wireless phones do not affect your system? If so, what are the results of those tests?

No.

- 28. What impact to the wireless network does the solution have?

Depends on a variety of factors. Most university wireless networks are capable of supporting Poll Everywhere. Poll Everywhere can also make use of cell phone carrier infrastructure through SMS and data networks on the students’ phones.

- 29. Can the audience response system be used spontaneously for polling?

Yes.

- 30. Can quiz questions and response distributions be imported and exported from and to plaintext or a portable format? (motivated by assessment & accreditation requirements).

Yes.

- 31. Is there a requirement that a portion of the course grade be based on the audience response system?

No.
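The vendor's answers above describe D2L integration as a manual step: export results as CSV, then import them into D2L. A minimal Python sketch of that hand-off might look like the following. Every column name here (`email`, `score`, `Username`, `Clicker Points`) is a hypothetical placeholder, not the documented format of either Poll Everywhere or D2L; check a real export file and the D2L import template before relying on it.

```python
import csv
import io


def to_gradebook_rows(export_csv_text, id_column="email", score_column="score"):
    """Sum each participant's scores from a response-system CSV export.

    The column names are assumed placeholders, not a documented format.
    """
    totals = {}
    reader = csv.DictReader(io.StringIO(export_csv_text))
    for row in reader:
        # Normalize the identifier so "Ann@..." and "ann@..." are one student.
        participant = row[id_column].strip().lower()
        totals[participant] = totals.get(participant, 0.0) + float(row[score_column])
    return sorted(totals.items())


def write_gradebook_csv(rows, item_header="Clicker Points"):
    """Write one line per student under a hypothetical gradebook header."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["Username", item_header])
    for participant, total in rows:
        writer.writerow([participant, f"{total:g}"])
    return out.getvalue()


# Invented sample data standing in for a real export file.
sample_export = (
    "email,question,score\n"
    "ann@example.edu,Q1,1\n"
    "bob@example.edu,Q1,0\n"
    "Ann@example.edu,Q2,1\n"
)

rows = to_gradebook_rows(sample_export)
gradebook_csv = write_gradebook_csv(rows)
print(gradebook_csv)
```

Because the conversion is a script rather than a server-side connector, it would need to be rerun after each polling session; that is the trade-off of a CSV-based integration compared with a direct LMS plug-in.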

Gloria Sheldon

MSU Moorhead

Fall 2011 Student Response System Pilot

Summary Report

NDSU has been standardized on a single student response (i.e., “clicker”) system for over a decade, with the intent to provide a reliable system for students and faculty that can be effectively and efficiently supported by ITS. In April 2011, Instructional Services made the decision to explore other response options and to identify a suitable replacement product for the previously used e-Instruction Personal Response System (PRS). At the time, PRS was laden with technical problems that rendered the system ineffective and unsupportable. That system also had a steep learning curve, was difficult to navigate, and was unnecessarily time-consuming to use. In fact, many universities across the U.S. experienced similar problems with PRS and have since then adopted alternative systems.

A pilot to explore alternative response systems was initiated at NDSU in fall 2011. The pilot was aimed at further investigating two systems—Turning Technologies and iClicker—in realistic classroom environments. As part of this pilot program, each company agreed to supply required hardware and software at no cost to faculty or students. Each vendor also visited campus to demonstrate their product to faculty, students and staff.

An open invitation to participate in the pilot was extended to all NDSU faculty on a first-come, first-served basis. Of those who indicated interest, 12 were included as participants in this pilot.

Pilot Faculty Participants:

- Angela Hodgson (Biological Sciences)

- Ed Deckard (AES Plant Science)

- Mary Wright (Nursing)

- Larry Peterson (History, Philosophy & Religious Studies)

- Ronald Degges (Statistics)

- Julia Bowsher (Biological Sciences)

- Sanku Mallik (Pharmaceutical Sciences)

- Adnan Akyuz (AES School of Natural Resource Sciences)

- Lonnie Hass (Mathematics)

- Nancy Lilleberg (ITS/Communications)

- Lisa Montplaisir (Biological Sciences)

- Lioudmila Kryjevskaia (Physics)

Pilot Overview

The pilot included three components: 1) Vendor demonstrations, 2) in-class testing of the two systems, and 3) side-by-side faculty demonstrations of the two systems.

After exploring several systems, Instructional Services narrowed the field to two viable options: Turning Technologies and iClicker. Both of these systems met initial criteria that were assembled based on faculty input and previous usage of the existing response system. These criteria included durability, reliability, ease of use, radio frequency transmission, integration with Blackboard LMS, cross-platform compatibility (Mac, PC), stand-alone software (i.e., no longer tied to PowerPoint or other programs), multiple answer formats (including multiple choice, true/false, numeric), potential to migrate to mobile/Web solutions at some point in the future, and cost to students and the university.

In the first stage of the pilot, both vendors were invited to campus to demonstrate their respective technologies. These presentations took place during spring semester 2011 and were attended by faculty, staff and students. The purpose of these presentations was to introduce both systems and provide faculty, staff, and students with an opportunity to take a more hands-on look at the systems and provide their initial feedback.

In the second stage of the pilot, faculty were invited to test the technologies in their classes during fall semester 2011. Both vendors supplied required hardware and software at no cost to faculty and students, and both provided online training to orient faculty to their respective system. Additionally, Instructional Services staff provided follow-up support and training throughout the pilot program. Both vendors were requested to ensure system integration with Blackboard. Both vendors indicated that they would provide the number of clickers necessary to test the systems equally across campus. Clickers from both vendors were allocated to courses of varying sizes, ranging from 9 to 400+ students, to test viability in various facilities with differing numbers of users. Participating faculty agreed to offer personal feedback and collect feedback from students regarding experiences with the systems at the end of the pilot.

In the final stage of the pilot, Instructional Services facilitated a side-by-side demonstration led by two faculty members. Each faculty member showcased each product on a function-by-function basis so that attendees were able to easily compare and contrast the two systems. Feedback was collected from attendees.

Results of Pilot

In stage one, we established that both systems were viable, appeared to offer similar features and functions, and were compatible with existing IT systems at NDSU. The determination was made to include both products in a larger classroom trial.

In stage two, we discovered that both systems largely functioned as intended; however, several differences between the technologies in terms of advantages and disadvantages were discovered that influenced our final recommendation. (See Appendix A for a list of these advantages, disadvantages, and potential workarounds.) We also encountered two significant issues that altered the course of the pilot. Initially, it was intended that both systems would be tested in equal number in terms of courses and students. Unfortunately, at the time of the pilot, iClicker was not able to provide more than 675 clickers, which was far fewer than anticipated. Turning Technologies was able to provide 1,395 clickers. As a result, Turning Technologies was used by a larger number of faculty and students across campus.

At the beginning of the pilot, Blackboard integration with iClicker at NDSU was not functional. The iClicker vendor provided troubleshooting assistance immediately, but the problem was not resolved until mid-November. As a result, iClicker users had to use alternative solutions for registering clickers and uploading points to Blackboard for student viewing. Turning Technologies was functional and fully integrated with Blackboard throughout the pilot.

During the span of the pilot, additional minor issues were discovered with both systems. A faulty iClicker receiver slightly delayed the effective start date of clicker use in one course. The vendor responded by sending a new receiver; however, it was an incorrect model. Instructional Services temporarily exchanged receivers with another member of the pilot group until a functional replacement arrived. Similarly, a Turning Technologies receiver was received with outdated firmware. Turning Technologies support staff identified the problem and assisted in updating the firmware with an update tool located on their website. A faculty participant discovered a flaw in the iClicker software that hides the software toolbar when disconnecting a laptop from a second monitor. iClicker technical support assisted in identifying the problem and stated the problem would be addressed in a future software update. A workaround was identified that mitigated this problem for the remainder of the pilot. It is important to note that these issues were not widespread and did not affect most pilot users; however, they attest to the need for timely, reliable, and effective vendor support.

Students and faculty reported positive experiences with both technologies throughout the semester. Based on feedback, users of both systems found the new technologies to be much improved over the previous PRS system, indicating that adopting either technology would be perceived as an upgrade among students and faculty. Faculty pilot testers met several times during the semester to discuss their experiences with each system; feedback was sent to each vendor for their comments, suggestions, and solutions.

During the stage three demonstrations, feedback from attendees focused on the inability of iClicker to integrate with Blackboard at that time and on the substantial differences between the two systems in terms of entering numeric values (i.e., Turning Technologies has numeric buttons, while iClicker requires the use of a directional key pad to scroll through numeric characters). Feedback indicated that attendees perceived Turning Technologies’ clickers to be much more efficient for submitting numeric responses. Feedback regarding other functionalities indicated relative equality between both systems.

Recommendation

Based on the findings of this pilot, Instructional Services recommends that NDSU IT adopt Turning Technologies as the replacement for the existing PRS system. While both pilot-tested systems are viable solutions, Turning Technologies appears to meet the needs of a larger user base. Additionally, the support offered by Turning Technologies was more timely and effective throughout the pilot. With the limited resources of IT, vendor support is critical and was a major reason for exploring alternative student response technologies.

From Instructional Services’ standpoint, standardizing on one solution is imperative for two major reasons: cost efficiency for students (i.e., preventing students from having to purchase duplicate technologies) and efficient use of IT resources (i.e., support and training). This recommendation reflects the opinion of the Instructional Services staff and the majority of pilot testers; it does not reflect consensus among all participating faculty and staff. Individual faculty members may elect to use other options that best meet their individual teaching needs, including (but not limited to) iClicker. As an IT organization, we continue to support technology that serves faculty, student, and staff needs across various colleges, disciplines, and courses. We feel that this pilot was effective in determining the student response technology (Turning Technologies) that will best serve NDSU faculty, students, and staff for the foreseeable future.

Once a final decision concerning standardization is made, contract negotiations should begin in earnest with the goal of completion by January 1, 2012, in order to accommodate those wishing to use clickers during the spring session.

Appendix A: Clicker Comparisons

Turning Technologies and iClicker

Areas where both products have comparable functionality:

- Setting up the receiver and software

- Student registration of clickers

- Software interface floats above other software

- Can be used with any application (PowerPoint, websites, Word, etc.)

- Asking questions on the fly

- Can create question and answer files

- Managing scores and data

- Allow participation points, points for correct answer, change correct answer

- Reporting – Summary and Detailed

- Uploading scores and data to Blackboard (although this capability was significantly delayed for the iClicker product)

- Durability of the receivers and clickers

- Free software

- Offer mobile web device product to go “clickerless”

Areas where the products differ:

Main Shortcomings of Turning Technologies Product:

- Costs $5 more – no workaround

- Doesn’t have an instructor readout window on the receiver base:

  - This is a handy function in iClicker that lets the instructor see the percentages of votes as they come in, allowing the instructor to plan how to proceed.

  - Workaround: As the time to answer the question winds down, the question and answers are displayed on the screen. Intermittently, the instructor pushes a button to mute the projector, pushes a button to view the graph results quickly, then pushes a button to hide the graph and a button to unmute the projector. In summary, the instructor pushes four buttons quickly each time he or she wants to see the feedback, and the students see a black screen momentarily.

- Processing multiple sessions when uploading grades:

  - Turning Technologies uses its own file formats, whereas iClicker uses comma-separated-value text files, which work easily with Excel.

  - Workaround: When uploading grades into Blackboard, upload them one session at a time, and use a calculated total column in Blackboard to combine them. Ideally, instructors would upload the grades daily or weekly to avoid a backlog of sessions.
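As a rough illustration of what combining sessions amounts to, the Python sketch below sums each student's score across several per-session comma-separated-value files, much as a calculated total column would. The column names `student_id` and `score`, the function name `combine_sessions`, and the sample data are all hypothetical; they are not taken from either vendor's actual export format.

```python
import csv
import io
from collections import defaultdict


def combine_sessions(session_files):
    """Sum each student's score across several per-session CSV exports.

    Assumption: every file has a header row with the hypothetical
    columns `student_id` and `score`.
    """
    totals = defaultdict(float)
    for handle in session_files:
        for row in csv.DictReader(handle):
            totals[row["student_id"]] += float(row["score"])
    return dict(totals)


# Two made-up sessions standing in for exported files.
session_1 = io.StringIO("student_id,score\ns001,2\ns002,1\n")
session_2 = io.StringIO("student_id,score\ns001,1.5\ns003,2\n")

totals = combine_sessions([session_1, session_2])
for student_id in sorted(totals):
    print(student_id, totals[student_id])
```

A student who misses a session simply contributes nothing for that session, so the total covers only the sessions in which a response was recorded.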

Main Shortcomings of iClicker Product:

- Entering numeric answers:

  - Questions that use numeric answers are widely used in math and the sciences. Instead of choosing a multiple-choice answer, students solve the problem and enter the actual numeric answer, which can include numbers and symbols.

  - Workaround: Students push the mode button and use the directional pad to scroll up and down through a list of numbers, letters, and symbols, choosing each character individually from left to right. Then they must submit the answer.

- Number of multiple-choice answers:

  - iClicker has 5 buttons on the transmitter for direct answer choices and Turning Technologies has 10.

  - Workaround: Similar to the numeric answer workaround. Once again the simpler transmitter becomes complex for the students.

- Potential vendor support problems:

  - It took iClicker over 3 months to get their grade upload interface working with NDSU’s Blackboard system. The Turning Technologies interface worked right away. No workaround.